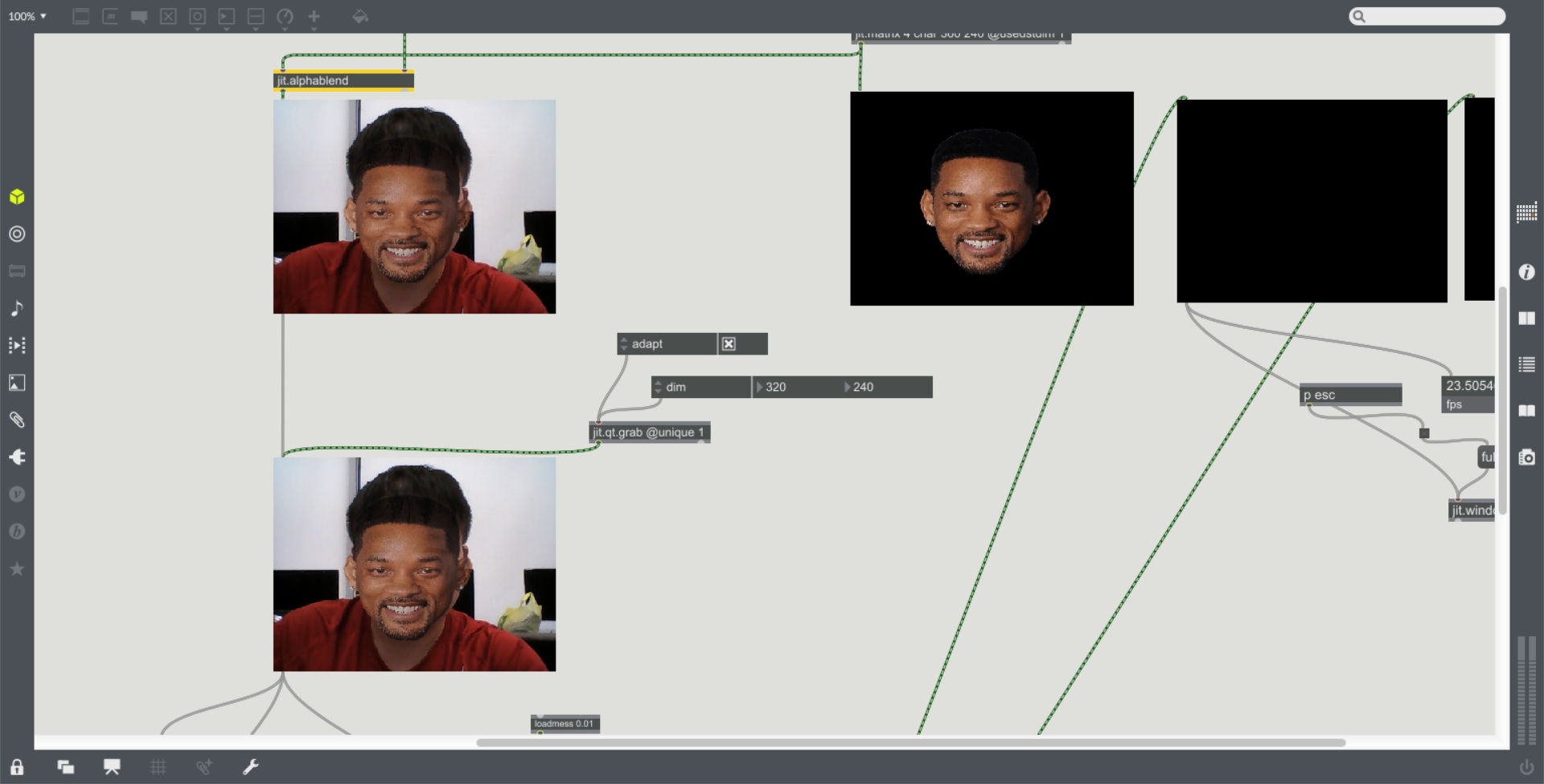

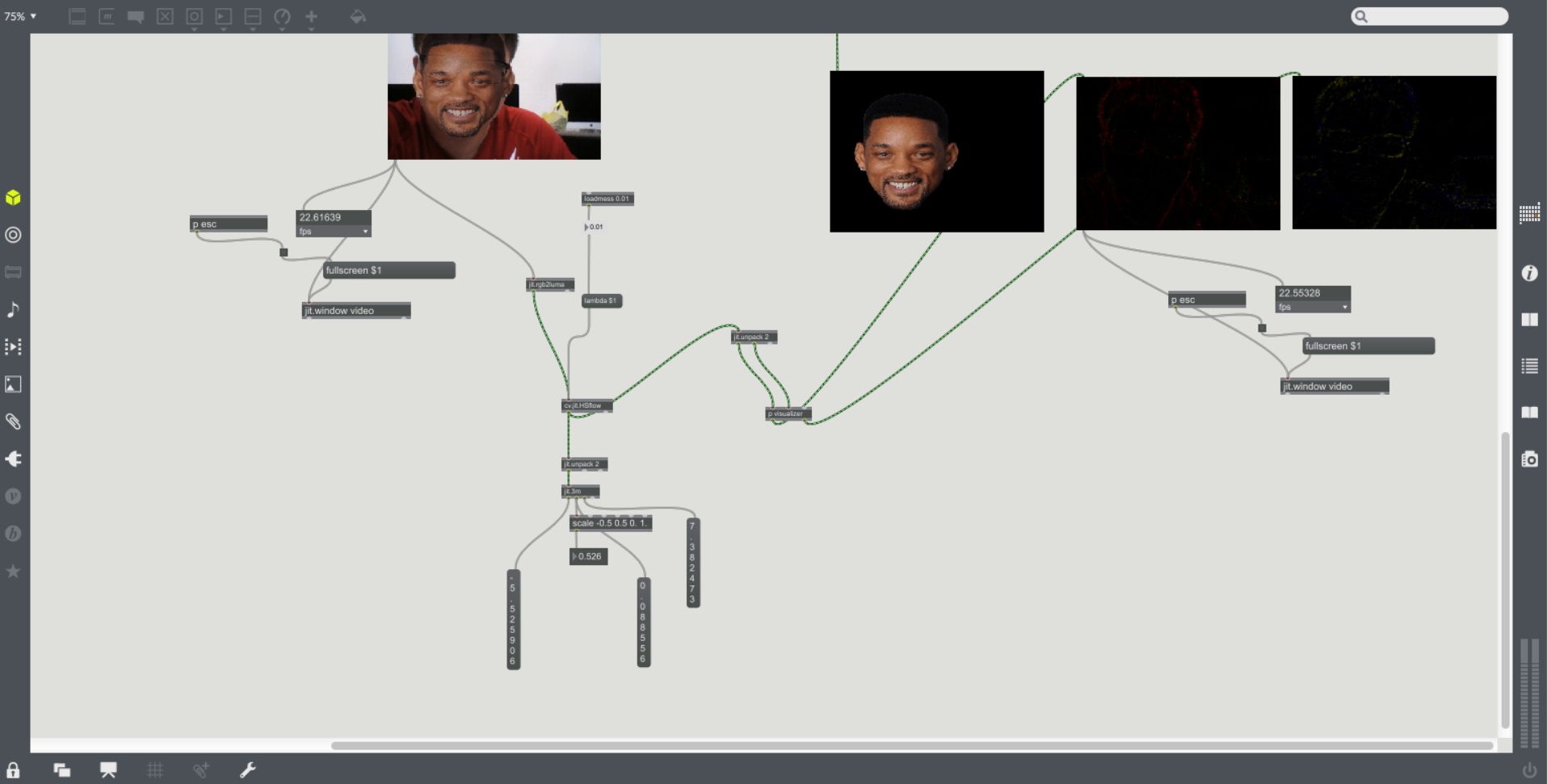

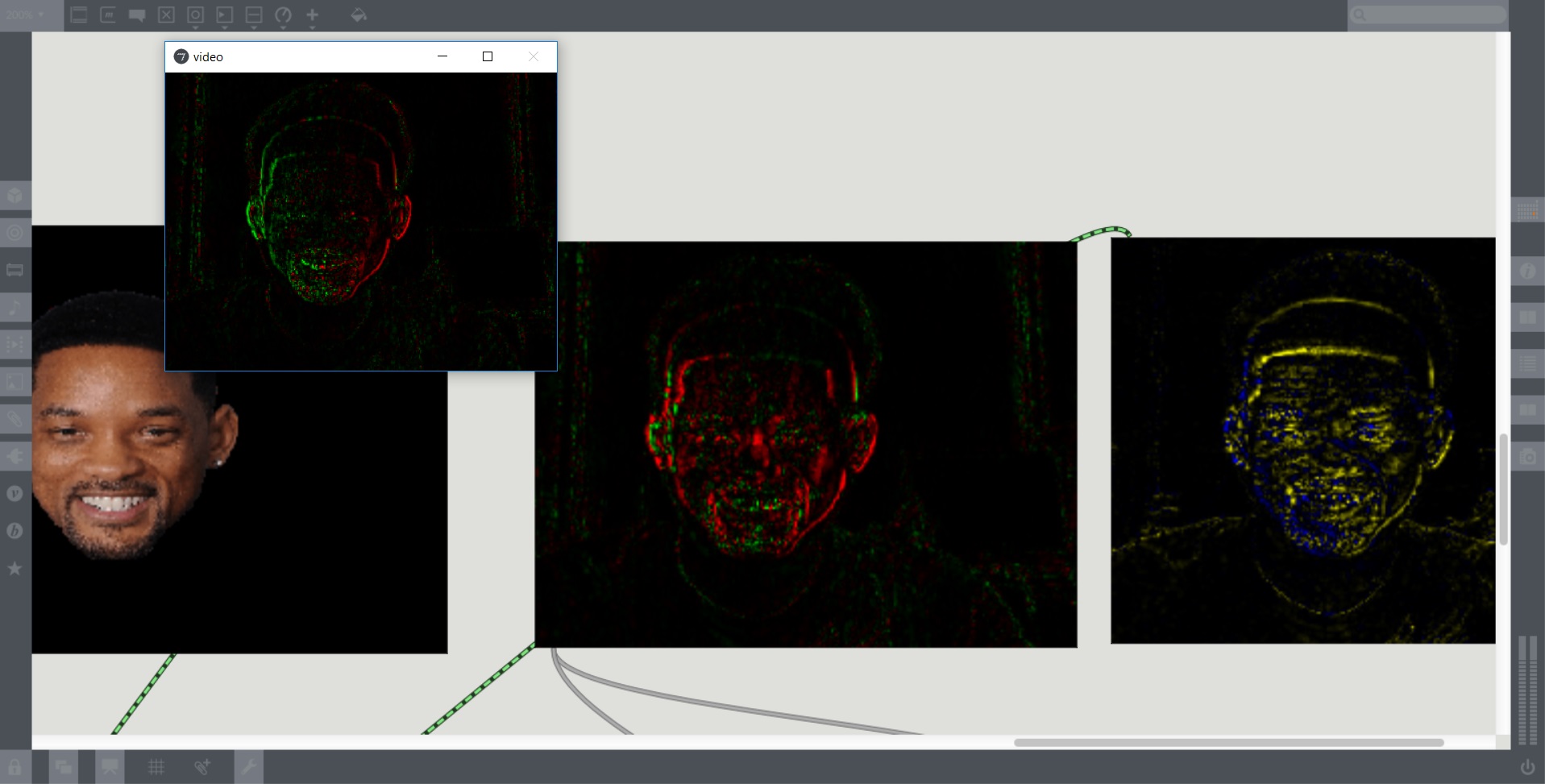

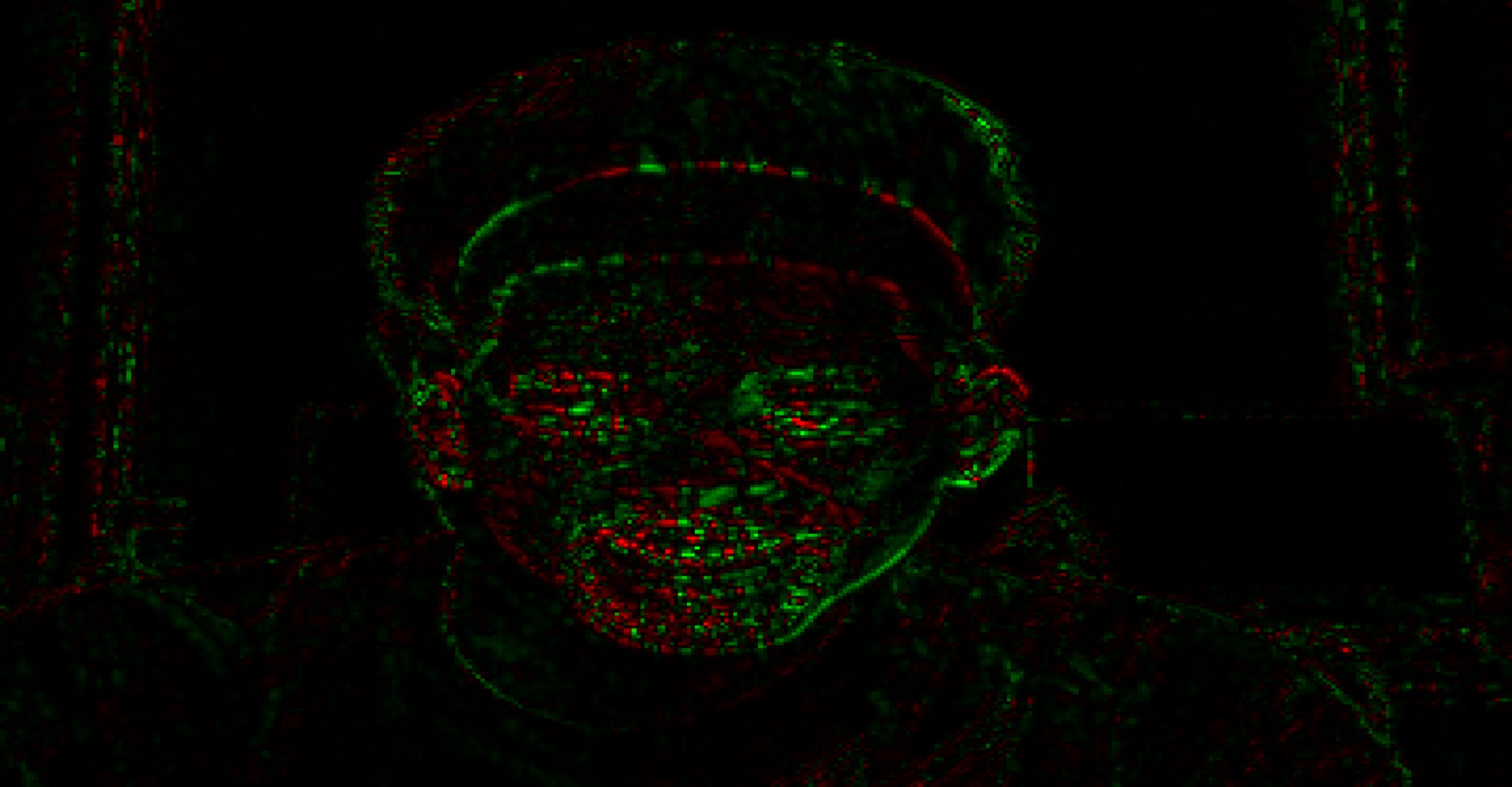

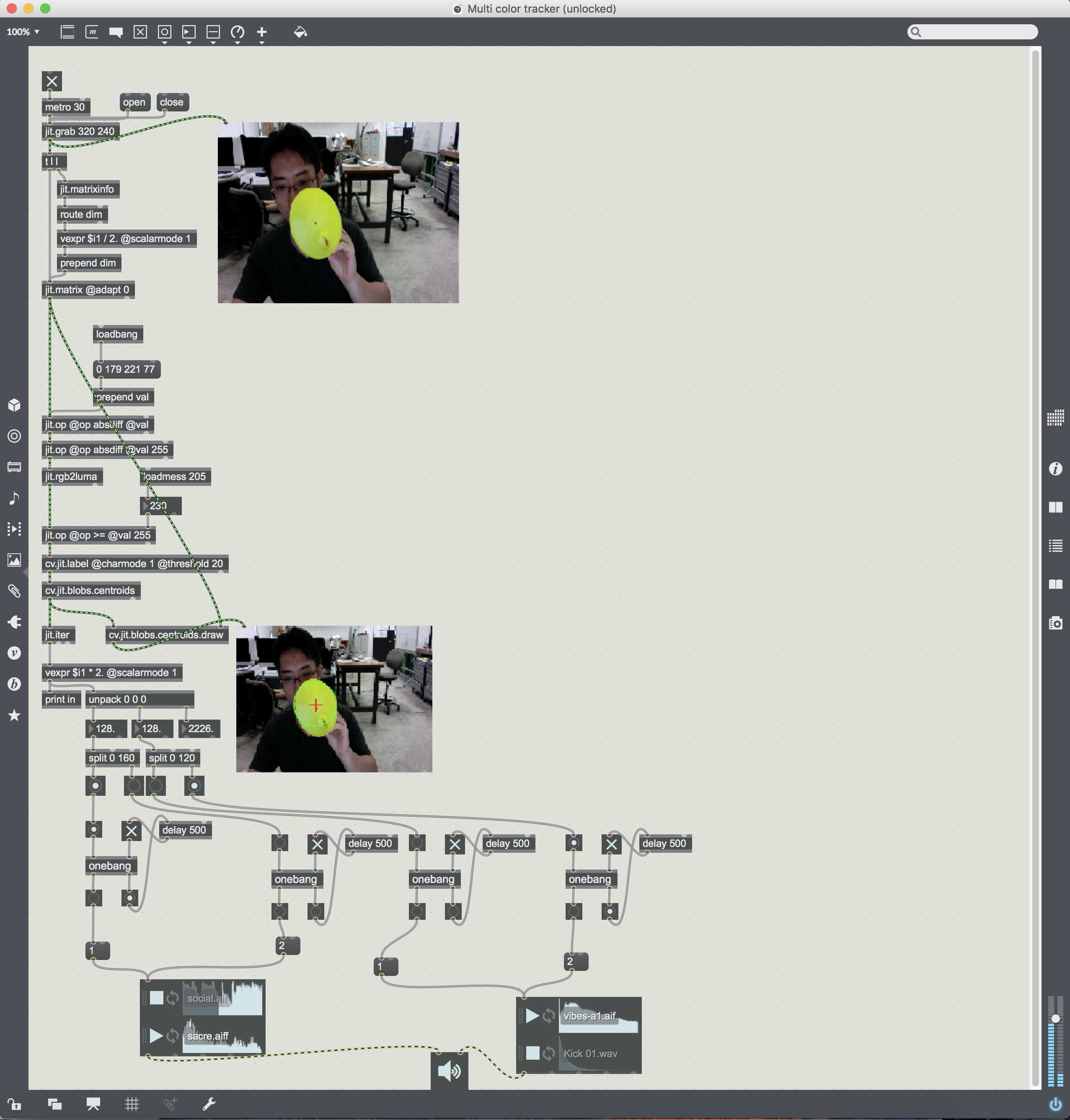

MEMORIES OF SOUND

Bao Song Yu & Zhou Yang

This is the video documentation for our final project presentation. We were glad that it drew responses from the people watching the interaction between the installation and its participant. Many people took videos and photos of the participant’s actions. This was the ideal scenario we wanted to achieve: one interaction between the installation and the participant, and another between the bystanders and the participant.