Concept

Initially, the idea was to make something fun to interact with: something subtle yet eye-catching that responded to noise and sound.

We then switched to motion tracking, because the ambient noise in North Spine is loud and unpredictable.

In the end

Finally, we decided to give Wally a personality, an identity of his own.

He is shy, so do try to be patient with him . . . and with what’s to come.

This post will focus more on the technical progress of this project.

Processing

For this project, we used Processing 3 and built a number of effects, with credit to the many people who helped: Daniel Shiffman, Thomas Sanchez Lengeling, Issac, Kapi, and Yasmine.

Components of the code:

Final code pt1:

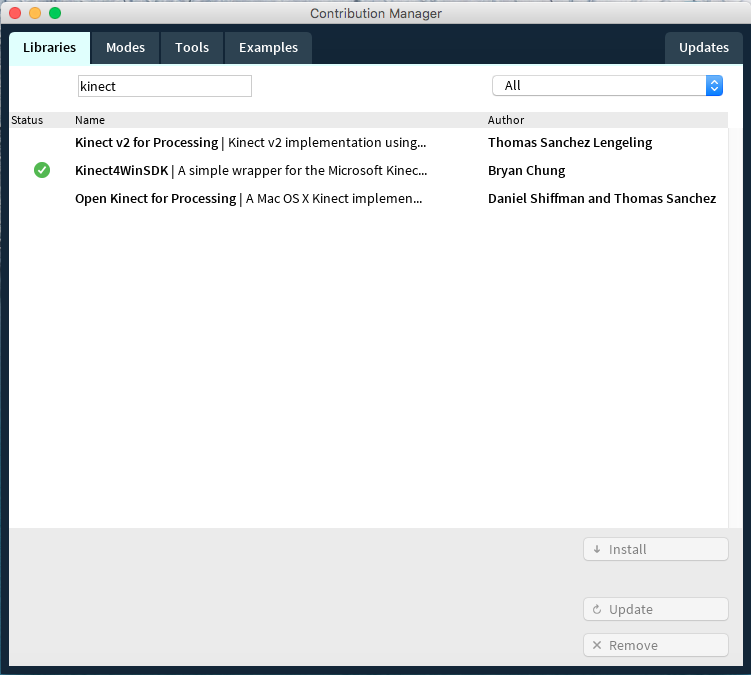

Kinect Library:

We used the skeletonColor sketch from Thomas Sanchez Lengeling’s library as the skeleton of our code.

We stripped it down so that only the code that tracks the head is left.

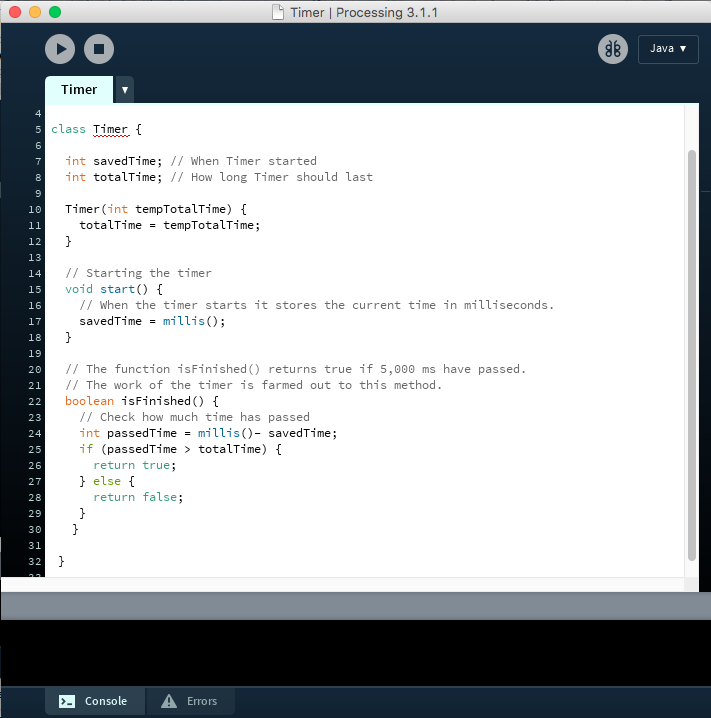

Timer:

Part of the work is time-based, so we used Daniel Shiffman’s timer code to count down and get the effect we wanted.
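To illustrate the countdown idea, here is a minimal sketch in plain Java. It is not the code from our sketch or Daniel Shiffman’s original; the class name `CountdownTimer` and its methods are hypothetical, and the current time is passed in as a parameter so the logic can be checked without waiting in real time.

```java
// Hypothetical helper for illustration only, not the project's actual timer.
class CountdownTimer {
    private final long durationMillis; // how long the countdown runs
    private long startMillis = -1;     // -1 means the countdown has not started

    CountdownTimer(long durationMillis) {
        this.durationMillis = durationMillis;
    }

    // Record the moment the countdown begins.
    void start(long nowMillis) {
        startMillis = nowMillis;
    }

    // Milliseconds left, clamped at zero once the countdown has elapsed.
    long remaining(long nowMillis) {
        if (startMillis < 0) {
            return durationMillis;
        }
        return Math.max(0, durationMillis - (nowMillis - startMillis));
    }

    // True only after start() was called and the full duration has passed.
    boolean isFinished(long nowMillis) {
        return startMillis >= 0 && nowMillis - startMillis >= durationMillis;
    }
}
```

Inside a Processing sketch, the value passed as `nowMillis` would typically be `millis()`, with `isFinished(millis())` checked in `draw()` to decide when to move on to the next effect.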

Images and Videos:

We loaded the video and image files rendered by Chris into our Processing sketch; they make up the meat of the work.

x-coordinates: To trigger the interaction, we added a condition so that when the camera detects a person at a certain x-position, the next part/video of the work is triggered.
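The x-coordinate condition can be sketched roughly as follows, again in plain Java. This is an assumption-laden illustration rather than our actual code: the class `PositionTrigger`, the threshold value, the "greater than or equal" direction of the comparison, and the idea of numbering the parts of the work as scenes are all hypothetical.

```java
// Hypothetical illustration of an x-position trigger, not the project's code.
class PositionTrigger {
    private final float triggerX;  // assumed x-position that starts the next part
    private final int sceneCount;  // assumed total number of parts/videos
    private int scene = 0;         // index of the part currently playing
    private boolean wasPast = false; // whether the head was past triggerX last frame

    PositionTrigger(float triggerX, int sceneCount) {
        this.triggerX = triggerX;
        this.sceneCount = sceneCount;
    }

    // Call once per frame with the tracked head x-coordinate.
    // Returns true only on the frame where a new part is triggered.
    boolean update(float headX) {
        boolean isPast = headX >= triggerX;
        // Fire once per crossing, and never advance beyond the last part.
        boolean fired = isPast && !wasPast && scene < sceneCount - 1;
        if (fired) {
            scene++;
        }
        wasPast = isPast;
        return fired;
    }

    int currentScene() {
        return scene;
    }
}
```

Remembering whether the head was already past the threshold on the previous frame keeps the next video from being re-triggered on every frame while someone stands in the trigger zone.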

Making Wally react to our interaction.

Demo

Issues

Doing a ‘shivering’ effect:

It came across as fear rather than shyness, so we took it out.

Kinect Library

Throughout the semester, we had trouble getting the Kinect to map movement onto our work. Initially, we had a library that worked, but it needed an external driver to operate. That driver stopped other software from connecting to the Kinect, so we decided not to use that library anymore.

Then Kapi pointed out the library by Thomas Sanchez Lengeling, which we adopted as the foundation of our code.

As most of the code had already been written, it was easy to ‘transfer’ it onto this new foundation.

For the progress and process of the visuals, click here.