DM2000: The Impulse Group Members

Ng Yen Peng Wendy, Li Yihan Vanessa, Jonathan Ming Chun Yu (IEM/4)

Chapter 1: Introduction to the Impulse

As the semester comes to an end, our DM2000 group is proud to present our final semester project, “The Impulse”. The project has two fundamental objectives:

1) Map specific hand movements to trigger a note, tune or audio file that is output from Ableton Live. Users can add different beats to the audio mix, effectively creating their own music depending on how many instruments and triggers they set up.

2) Allow the user to generate large visual graphics that appear on the projector screen in front of them. The visualization in 3D space changes shape, colour, zoom and so on with a simple wave of the hand(s), depending on how the user wants to control the trigger.

To understand the design and complexity of our semester project, it helps to look at each of its technical components and briefly explain how each one operates as a standalone tool. The components we will be showcasing are listed below, followed by our demo video (how each component works is briefly explored in Chapter 3):

1) Synapse

2) Ableton Live 9

3) Quartz Composer

4) MAX/MSP/Jitter + Syphon

5) Demo Video

Chapter 2: The V Motion and Bubblegum Sequencer

The V Motion Project

The main source of inspiration for our semester project is the V Motion Project, which aimed not only to produce music through motion, but also to map the beats and tones to a neatly organized visual interface. The interface makes it easy for the user to tap the functions in quick succession and gives the feeling of a real-time techno concert being orchestrated with the mere wave of a hand.

The V Motion Project itself required a team of about 20 people to build the many functions available on the visual interface, from the repeated triggering of single notes and the control of the audio’s frequency to the latency of the triggers themselves, so that there would be no lag when a joint hits the area that plays the audio.

The Bubblegum Sequencer

Besides giving the user the option to trigger the respective audio or note with a mere touch, our semester project also bears some similarity in concept to the Bubblegum Sequencer that was shown in class, as our project also incorporates synchronized beats that loop over and over for as long as the user has turned on the trigger for that audio. On top of that, the user is given greater flexibility over the different beats and tones he or she can mix together, so there is certainly a lot of joy to be had in mixing your own audio.

Chapter 3: Patches and Software

1) Synapse for Kinect

For this project, Synapse for Kinect is used to control Ableton Live, Quartz Composer, Max/MSP/Jitter, and any other application that can receive OSC events. It sends joint positions and hit events via OSC, and also sends the depth image into Quartz Composer. In this way, it allows you to use your whole body as an instrument.
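To make the OSC traffic concrete, here is a minimal Python sketch of how a receiver could decode a single OSC message. It is an illustration only, not Synapse's own code: the address `/righthand_pos_body`, the three-float payload and the helper name `parse_osc_message` are assumptions, and OSC bundles are not handled.

```python
import struct


def _read_padded_string(data, offset):
    """Read a null-terminated OSC string that is padded to a 4-byte boundary."""
    end = data.index(b"\x00", offset)
    text = data[offset:end].decode("ascii")
    next_offset = (end + 4) & ~3  # skip the terminator and any padding
    return text, next_offset


def parse_osc_message(data):
    """Decode one OSC message into (address, [arguments])."""
    address, offset = _read_padded_string(data, 0)
    type_tags, offset = _read_padded_string(data, offset)
    args = []
    for tag in type_tags[1:]:  # the first character is always ","
        if tag == "f":
            args.append(struct.unpack_from(">f", data, offset)[0])
            offset += 4
        elif tag == "i":
            args.append(struct.unpack_from(">i", data, offset)[0])
            offset += 4
        elif tag == "s":
            text, offset = _read_padded_string(data, offset)
            args.append(text)
        else:
            raise ValueError("unsupported OSC type tag: " + tag)
    return address, args


# Hand-built example packet (assumed address and values, for illustration only).
packet = (
    b"/righthand_pos_body\x00"
    + b",fff\x00\x00\x00\x00"
    + struct.pack(">fff", 0.5, -0.25, 1.0)
)
address, (x, y, z) = parse_osc_message(packet)
print(address, x, y, z)
```

The same decoder would also read a hit event carried as a string argument, which is why the `s` tag is supported alongside floats and integers.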

http://synapsekinect.tumblr.com/ for downloads.

2) Ableton Live 9

Ableton Live 9 is sequencer software that we use to map the triggers that play the respective audio or note files. Basically, we use Synapse (via Kinect) to read the person’s joints, which trigger the OSC events (a trigger can be mapped to joints such as the hands, elbows or shoulders, depending on how the user wants to trigger the sound).

http://synapsekinect.tumblr.com/post/6307739137/ableton-live for Ableton Tutorial

On top of having Synapse installed, we also require a series of patches called Max For Live Essentials installed within Ableton Live 9. http://synapsekinect.tumblr.com/post/6305020721/download

This section contains three very simple video tutorials on how to map the triggers to the audio. Our group used three common triggers to trigger the music in this project. Refer to the video situated above each description to understand the mapping being demonstrated.

A) Max Kinect Event

This maps a single joint movement (moving your right hand towards your right in this tutorial example) to the audio file you want to trigger. You will need the Max Clip Launcher alongside the Max Kinect Event patch to play the audio file.

In this example, the clip launcher is pointed at the track you want to play, and the “right hand right” trigger is then mapped to the launch button of the clip launcher. This triggers the launch button (which plays the audio file) every time you point your hand towards the right.
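The idea of a single-joint trigger can be sketched in a few lines of Python. This is a simplified stand-in, not the logic inside Synapse or the Max Kinect Event patch: it assumes the hand's x position is given in millimetres relative to the torso, and the `HitDetector` class and its 400 mm threshold are hypothetical.

```python
class HitDetector:
    """Fire once when the hand moves past a threshold, then wait for it to return."""

    def __init__(self, threshold_mm=400.0):
        self.threshold_mm = threshold_mm
        self._armed = True

    def update(self, hand_x_mm):
        """Return True exactly once per outward movement of the hand."""
        if self._armed and hand_x_mm > self.threshold_mm:
            self._armed = False  # block repeats while the hand stays out
            return True
        if hand_x_mm < self.threshold_mm * 0.5:
            self._armed = True  # hand has come back, allow the next hit
        return False


detector = HitDetector()
samples = [0.0, 100.0, 450.0, 500.0, 100.0, 450.0]
hits = [detector.update(x) for x in samples]
print(hits)
```

Re-arming only after the hand has returned halfway keeps a hand that hovers near the threshold from launching the clip over and over.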

B) Max Kinect Double Event

This maps two joint movements that must be activated simultaneously (moving your left hand towards your left and your right hand towards your right in this example) to the audio file you want to trigger. You will need the Max Clip Launcher alongside the Max Kinect Double Event patch to play the audio file.

In this example, the clip launcher is pointed at the track you want to play, and the simultaneous “left hand left” and “right hand right” trigger is then mapped to the launch button of the clip launcher. This triggers the launch button (which plays the audio file) every time you move your left hand towards the left and your right hand towards the right in unison.
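One way to think about "simultaneous" is that both hit events must arrive within a short time window of each other. The sketch below illustrates that idea in Python; the function name `is_double_event` and the 0.15 second window are assumptions, not values taken from the Max Kinect Double Event patch.

```python
def is_double_event(left_hit_time_s, right_hit_time_s, window_s=0.15):
    """Return True when both hits exist and are close enough in time."""
    if left_hit_time_s is None or right_hit_time_s is None:
        return False  # one of the two gestures has not happened yet
    return abs(left_hit_time_s - right_hit_time_s) <= window_s


print(is_double_event(1.00, 1.10))  # hits 0.1 s apart
print(is_double_event(1.00, 1.50))  # hits 0.5 s apart
```

A wider window makes the gesture easier to perform but also more likely to fire by accident.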

C) Max Kinect Dial

This maps a joint (the right hand in this example) to any particular dial of the audio file’s waveform (for example Frequency, LFO or Volume). Once you turn it on using the Max Notedial, you can control the dial simply by waving your arm up and down.

In this example, you will need to insert a new MIDI track as shown in the video and then drag the audio file into the MIDI track. You will need to use Max Notedial alongside Max Kinect Dial. In the Max Kinect Dial, select the y-coordinate and body relative (you can play around with the other coordinates), and then map it to any of the dials of the sound file next to it. Finally, turn on the button on the note dial and observe how the tone changes when you move your arm up and down.
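The dial mapping amounts to clamping the hand's height to a working range and rescaling it to the dial's range. Here is a minimal Python sketch of that arithmetic; the millimetre range, the 0 to 127 dial range and the name `hand_to_dial` are all assumptions chosen for illustration.

```python
def hand_to_dial(y_mm, y_min_mm=-300.0, y_max_mm=500.0, dial_min=0, dial_max=127):
    """Scale a body-relative hand height to a dial value, clamped to the dial range."""
    t = (y_mm - y_min_mm) / (y_max_mm - y_min_mm)  # 0.0 at the bottom, 1.0 at the top
    t = max(0.0, min(1.0, t))                      # ignore movement outside the range
    return round(dial_min + t * (dial_max - dial_min))


print(hand_to_dial(-300.0))  # arm fully lowered
print(hand_to_dial(500.0))   # arm fully raised
```

Clamping matters in practice because a tracked joint can briefly jump outside the expected range when the sensor loses the hand.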

Composition of Background Music

To enrich the overall audio effect, and to compensate for the interaction lag that results from running all the different programs simultaneously, we composed a piece of background music. The exported waveform of this background music, Buzz3, is loaded into audio track #7 of UltimateFinalBuzz.als.

Even though each clip is triggered instantly by its mapped gesture, running many programs at the same time delays the music by 1 to 2 bars. For future development, we want to run the project on separate PCs with much more powerful processors, and to add more Kinects for better detection accuracy. To develop the project further, clips such as DRUM_Kick G, FX_Impact1 and FX_Sweep Up could be mapped to other unused gestures or to Max OSC Control events, and the background music could eventually be removed, which would give users more freedom to experiment.

The Max OSC Control is the Max for Live effect that allows Ableton to receive messages from MAX. After modifying it, we could send the index of the region that the user is tapping to Ableton. However, we could not resolve one issue: this Control effect triggers a Max Clip Launcher only once. Its logic needs to be looked into again.

3) Quartz Composer

In this project, Quartz Composer captures the contour of the figure and creates a square mesh with a city-light effect on the bright edges. This is achieved with a powerful QC plug-in named v002-rutt-etra, which can be found at http://v002.info/plugins/v002-rutt-etra/

Quartz Composer reads joint position and movement information using the qcOSC plug-in (Figure 1), which reads on port 12348 from Quartz_passthrough_plus (Figure 2). In the demo video, because of the limited number of ports, we had to give up the warfare particle effect, which is generated at and moves along with the hand position (Layers 7 & 8). Layers 5 & 6 are a simpler version of the particle generation. During this process, the coordinates have to be carefully mapped to the correct screen scale, which requires some math calculations.
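As an illustration of the kind of calculation involved, the Python sketch below converts a pixel position into Quartz Composer units, where the horizontal axis runs from -1 to 1 and the vertical extent depends on the aspect ratio. The 640 by 480 input size, the top-left pixel origin and the name `to_qc_units` are assumptions, not values read from the composition.

```python
def to_qc_units(x_px, y_px, width_px=640, height_px=480):
    """Map pixel coordinates (origin top-left) to Quartz Composer units (origin centre)."""
    aspect = height_px / width_px
    x = (x_px / width_px) * 2.0 - 1.0                       # -1 at left, +1 at right
    y = (1.0 - y_px / height_px) * 2.0 * aspect - aspect    # +aspect at top, -aspect at bottom
    return x, y


print(to_qc_units(320, 240))  # centre of the image
print(to_qc_units(0, 0))      # top-left corner
```

The vertical flip is needed because pixel rows count downwards while Quartz Composer's y axis points up.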

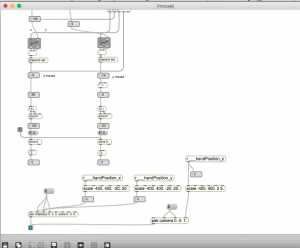

4) MAX/MSP/Jitter

MAX receives joint positions (x, y, z) from Synapse via udpreceive on port number 12347.

Sending OSC Messages from MAX Kinect to Ableton Live

MAX detects hand position hit events and sends them to Ableton Live via udpsend on port number 22345.
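Outside of MAX, the same kind of message could be produced with a few lines of Python. The sketch below builds an OSC message carrying an integer region index and sends it over UDP to port 22345. The address `/region`, the helper names and the use of localhost are assumptions for illustration; the actual messages are produced by udpsend inside the patch.

```python
import socket
import struct


def _pad(raw):
    """Null-terminate an OSC string and pad it to a multiple of 4 bytes."""
    raw += b"\x00"
    return raw + b"\x00" * (-len(raw) % 4)


def build_osc_message(address, *ints):
    """Encode an OSC message whose arguments are all 32-bit integers."""
    type_tags = "," + "i" * len(ints)
    payload = b"".join(struct.pack(">i", value) for value in ints)
    return _pad(address.encode("ascii")) + _pad(type_tags.encode("ascii")) + payload


def send_region_hit(region_index, host="127.0.0.1", port=22345):
    """Send the index of the region that was hit as one UDP datagram."""
    packet = build_osc_message("/region", region_index)  # hypothetical address
    with socket.socket(socket.AF_INET, socket.SOCK_DGRAM) as sock:
        sock.sendto(packet, (host, port))
    return packet


print(build_osc_message("/region", 3))
```

Because UDP is connectionless, `send_region_hit` does not confirm that anything is listening on the port, so a silent receiver has to be debugged on the receiving side.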

Jitter Visualization in 3D

Instead of using matrices to manipulate the visualization, Jitter’s 3D graphics library and OpenGL are used to render high-quality graphics at a much faster speed.

The visualization was created using jit.gl.gridshape, which renders to a GL context, together with jit.gl.mesh (the drawing element) to create the mesh visualization on the same GL context. Finally, the render shows up in a jit.window.

The colors for visualization are randomly generated using a mood machine that sends the output as a texture. By using a time ramp, slow gradual color change was generated to apply to the visualization.
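The time ramp can be pictured as a linear interpolation between two randomly chosen colours. The Python sketch below shows that idea only; it is not the mood machine patch, and the function names and the 10 second ramp are assumptions.

```python
import random


def random_colour(rng):
    """Pick a random RGB colour with each channel in the range 0.0 to 1.0."""
    return (rng.random(), rng.random(), rng.random())


def ramp_colour(start_rgb, end_rgb, elapsed_s, duration_s):
    """Blend linearly from start_rgb to end_rgb as elapsed_s goes from 0 to duration_s."""
    t = max(0.0, min(1.0, elapsed_s / duration_s))
    return tuple(a + (b - a) * t for a, b in zip(start_rgb, end_rgb))


rng = random.Random(2000)  # fixed seed so the run is repeatable
start, end = random_colour(rng), random_colour(rng)
for second in (0, 5, 10):
    print(second, ramp_colour(start, end, second, 10.0))
```

When the ramp finishes, the end colour can become the next start colour and a new random target can be drawn, which keeps the change gradual.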

In order to create a greater visual impact upon the player, the right hand position was mapped to control the jit.gl.render camera’s position. The camera’s x & y rotation is controlled by the hand’s x & y position while the camera’s z position is controlled by the hand’s z position.

Basically, the patch combines different layers (layer 1 and layer 2) in 3D space using jit.gl.videoplane. jit.gl.texture supplies the visualization as a texture (layer 1), and one layer is overlaid on top of the other. Layer 2 is the audio track histogram captured from the audio input. In this case, however, audio playback is not triggered inside the patch, because the audio is triggered in Ableton Live via the OSC messages sent for hand position hit events.

5) Quartz Composer with Jitter – Syphon

Quartz Composer turns the Synapse depth image into a silhouette effect. Using a Syphon client, the Max patch receives the Quartz Composer silhouette effect and adds it as a video plane texture that overlaps the visualization. The blending mode can be adjusted to make the colors of the visualization look richer. In this case, the blending mode “add” was selected.

Syphon Server for QC is a consumer patch that executes on a specific layer. The output is patched to the Syphon Server’s image port, and the source is set to “OpenGL Scene”, which captures any content rendered into the Viewer.
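Additive blending simply sums the two layers channel by channel and clamps the result, which is why overlapping areas look brighter and richer. A minimal Python sketch of the arithmetic for one pixel, assuming colour channels in the range 0.0 to 1.0 and a hypothetical helper name `blend_add`:

```python
def blend_add(base_rgb, overlay_rgb):
    """Add two colours channel by channel, clamping each channel at 1.0."""
    return tuple(min(1.0, b + o) for b, o in zip(base_rgb, overlay_rgb))


visualization_pixel = (0.25, 0.5, 0.75)
silhouette_pixel = (0.5, 0.5, 0.5)
print(blend_add(visualization_pixel, silhouette_pixel))
```

Black areas of the silhouette add nothing, so the visualization shows through unchanged wherever the overlay is dark.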

Compositions can use any number of Syphon Clients and Servers, and users can load numerous compositions that leverage Syphon.

http://syphon.v002.info/ for downloads.

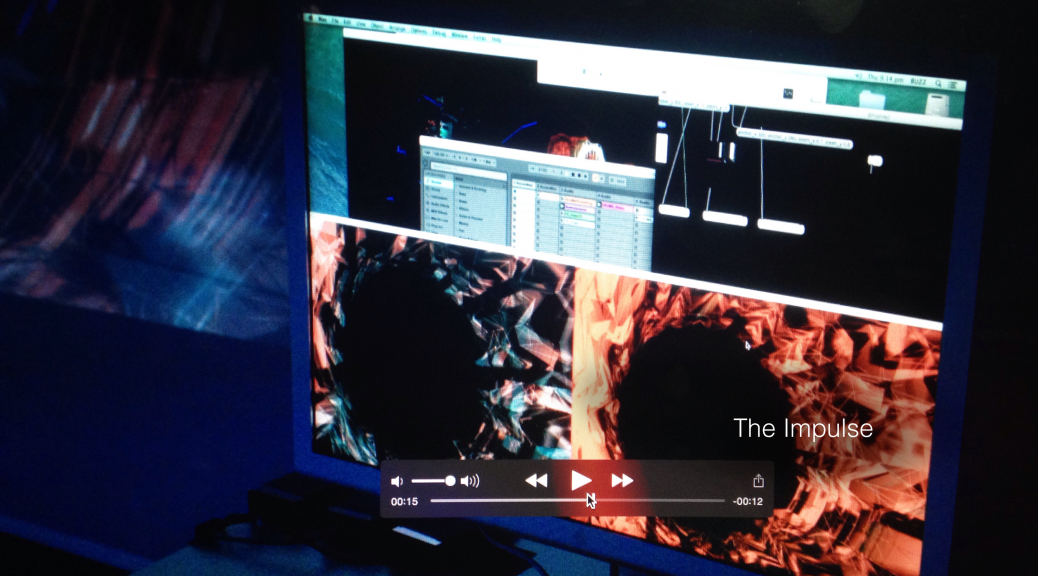

Chapter 4: Demo Video

We divided our short summary demo video into three sections, which are explained in further detail below. We hope you enjoy them!

Click Here for a downloadable link to our demo video:

A) The Preparation Before Our Demonstration.

In preparation for the final demonstration to our class and the professor, our group went through meticulous checks to ensure that all the audio triggers would fire at the locations set in Ableton Live, and that the visualization was projected properly. We chose to perform the Impulse project at the Basement 1 studio because it has three large projectors within a closed environment (the music that was going to be played would be very noisy).

B) Snippets of the Project Demonstration

Here are some snippets of the different audio sounds that can be produced with different gestures as well as the general overview of how the visual layout should react when the hands are moving about.

C) Full Live Demo of The Impulse and Audience Interaction

Here is a full scene demonstrating how the Impulse works.

The greatest interaction of “The Impulse” comes from the fact that users are encouraged to step forward to create their own dance as well as compose their own musical tunes. This keeps up the energetic, joyous feel of our project throughout, akin to allowing anyone to be their own DJ at a disco party. If we were to expand our audio mapping further, we would like to integrate more intricate functions, such as a 360-degree audio and visual interface panel to control the tempo of the music being played. We could also increase the number of playable beats (or perhaps add different instruments).