Fortune Taker

In this project, we set up a booth and invited people to have their fortunes read by a virtual fortune teller.

Little did they know that we were out to scare them and capture their reactions.

The original concept was to take paparazzi-style photographs of people whenever they entered a room. This was meant to question the idea of privacy, and we wanted to post those photographs on Twitter. Fortune Taker was born after much discussion on how to make the project stronger.

We used a strobe light to light up the participants' faces and to add an element of surprise.

The iSight camera was used to capture photographs and video of their reactions.

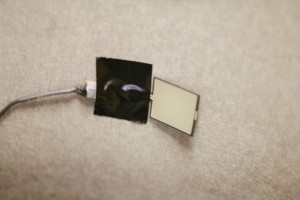

The tea box sensor was used to activate the video when the participant leaned back in their chair.
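The lean-back trigger can be pictured as a simple threshold check on the sensor readings. The sketch below is only an illustration in Python, not the patch we actually built: the function name `first_trigger_index`, the 0-to-1 reading scale, the `threshold` value, and the `hold` count are all assumptions made for the example. It waits for a few consecutive high readings so that a brief bump against the chair does not start the video.

```python
def first_trigger_index(readings, threshold=0.5, hold=3):
    """Return the index at which `hold` consecutive readings have reached
    `threshold`, or None if that never happens.

    `readings` is a hypothetical list of normalised sensor values (0 to 1);
    the real sensor scale and threshold are not given in this write-up.
    """
    run = 0  # number of consecutive readings at or above the threshold
    for i, value in enumerate(readings):
        if value >= threshold:
            run += 1
            if run >= hold:
                return i  # participant has leaned back long enough
        else:
            run = 0  # pressure released, start counting again
    return None


# Made-up readings: one brief bump, then a sustained lean.
samples = [0.1, 0.2, 0.7, 0.1, 0.6, 0.8, 0.9, 0.9]
trigger_at = first_trigger_index(samples)
if trigger_at is not None:
    print(f"Start the video at sample {trigger_at}")
```

Requiring several readings in a row is one way to make the trigger less jumpy; a single-reading trigger (`hold=1`) would react faster but could fire by accident.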

Headphones helped the participants immerse themselves in the experience.

Two separate computers and patches were needed to make this project work.

Below is the PDF file of our presentation.

Fortune Taker presentation

Above is the video of our overall process.

Done By: Cheong Su Hui & Kamarulzaman Bin Mohamed Sapiee