I really like the outcome of our final project.

This is the link to our video wall: Video Wall on Third Space Network.

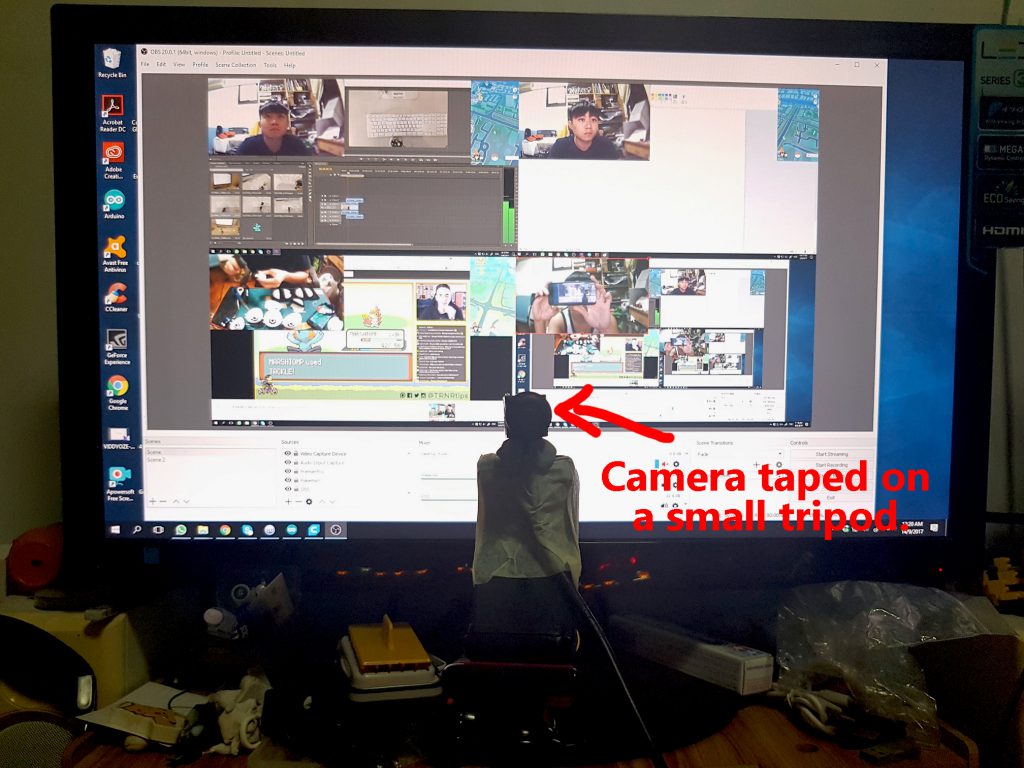

This is a screen recording of the video wall on Third Space Network.

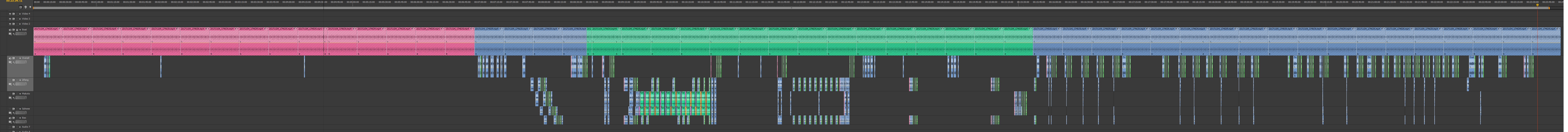

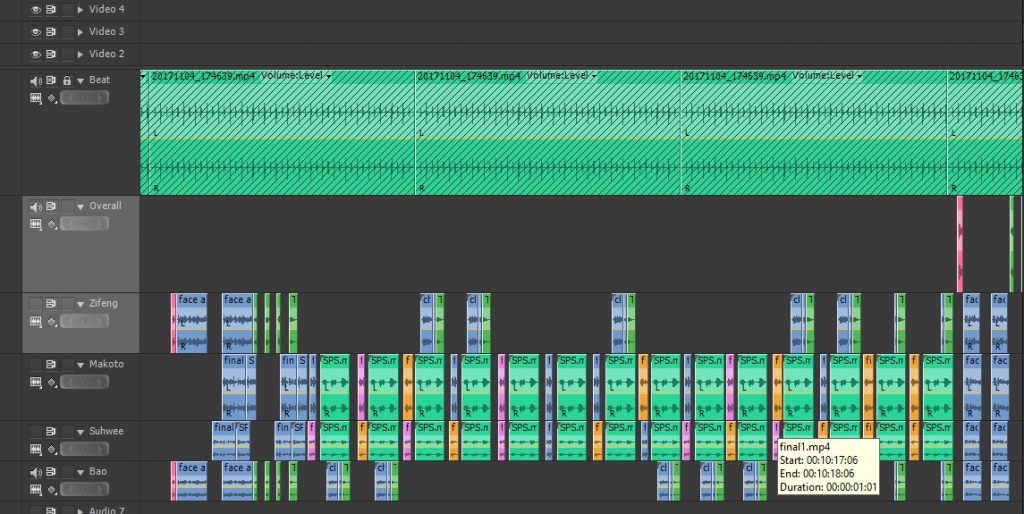

And this is what I did in Premiere Pro before the wall was made, to get a general feel of what it would look like and to get the exact timing for starting each video in the video wall.

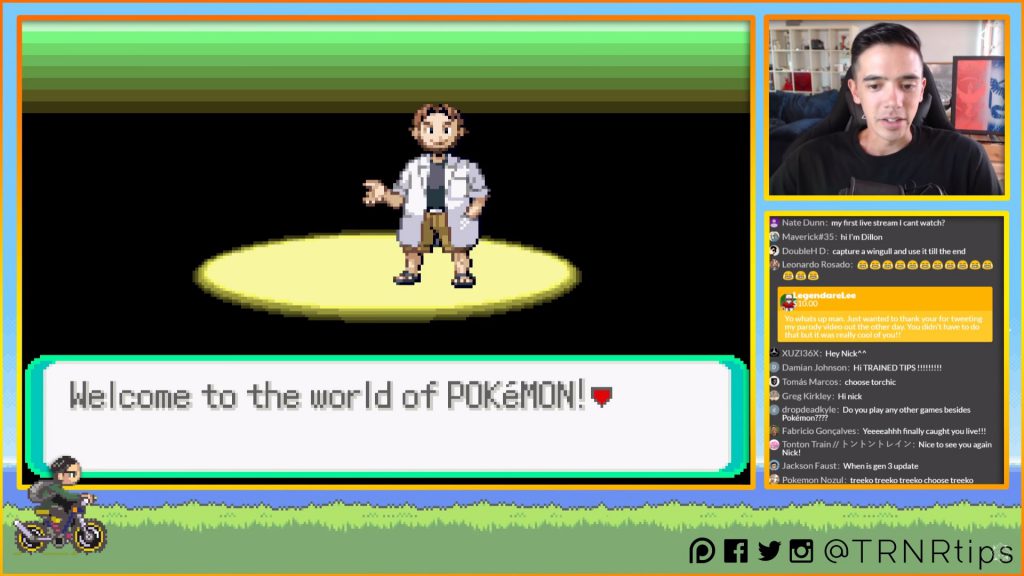

Lastly, this was my individual Facebook broadcast. Just to re-emphasize the point: what made our project really interesting was the video wall. Each individual broadcast doesn’t seem like much, but when it is linked with the rest, together they produce a piece that is much more than any one broadcast by itself.

Posted by ZiFeng Ong on Friday, 10 November 2017

We are the third space and first space performers.

The main purpose of our project planning was to begin with the end in mind: to present the four broadcasts in a video wall that would be interesting for the viewer to watch. We would be the third space performers by having our broadcasts interact with each other in the third space.

Our final presentation in class would also be a live performance. I am not talking about the fact that everyone would watch what WAS BROADCAST LIVE, nor that the audience in class would be watching it in THE PRESENT. The timing of clicking start on each video must be precise to the millisecond, and it cannot be replicated when anyone plays the videos again by themselves later, because Telepathic Stroll looks vastly different if the videos are started at even slightly different times. So we are giving a live performance by playing the four videos in the way we think gives the optimum viewing sensation.

How did we perfect our timing even when we could not see each other?

This is the reveal of our broadcasting secret! GET READY! CLEAN YOUR EARS (EYES) AND BE PREPARED! We called our system the… *insert drumroll in your mind*

“THE MASTER CONTROLLER”

So… what is the Master Controller?

Basically, it is a carefully crafted 23-minute soundtrack. It consists of drum beats that work like a metronome for syncing our actions, plus careful instructions telling each performer what to do at which exact moment. Every performer has a personalized soundtrack because we are doing different tasks at the same time.

How was it made?

It was made in Premiere Pro with a web-based text-to-speech reader: I screen-recorded the reader while it was speaking and extracted the sound into Premiere Pro. The whole process of creating the soundtracks took more than 18 hours, and I lost count of the time after that.

So how does it work exactly?

The basic idea is that the instructions prepare us and tell us what to do, like “Next will be half face on right screen. Half face on right screen in, 3, 2, 1, DING!”. We execute our action or change of camera view on the “DING!” so that all our actions synchronize.

It started at the meeting point, where we all started the soundtrack at the same time. It counted down the 7 minutes we had to walk to our respective starting points and wait for the broadcasting to start. I started broadcasting first, followed by Makoto, SuHwee and then Bao, at 5-second intervals. This was done to give us control when we play the four broadcasts in class and achieve maximum synchronization: if we had all started broadcasting at exactly the same time, we could not click play on all four videos at once in class, which would result in de-synchronization. After starting the broadcast, we each filmed the environment for between 30 and 15 seconds, depending on when we had started broadcasting. This absorbed the difference in start times, so that everything afterwards happened at exactly the same time.
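The staggered start can be worked through as a small calculation. The sketch below is only an illustration in Python, not something we actually used (the tracks were built by hand in Premiere Pro). The names `START_ORDER`, `STAGGER_S`, `SYNC_POINT_S` and `environment_durations` are hypothetical, and the sync point of 30 seconds after the first broadcast starts is an assumption read off the 30 to 15 second range.

```python
# Hypothetical illustration of the staggered start; not the real tooling.
START_ORDER = ["ZiFeng", "Makoto", "SuHwee", "Bao"]  # order in which we went live
STAGGER_S = 5       # seconds between consecutive broadcast starts
SYNC_POINT_S = 30   # assumed: seconds after the first start when synced actions begin


def environment_durations(order, stagger_s, sync_point_s):
    """Return how long each performer films the environment before the sync point."""
    durations = {}
    for index, name in enumerate(order):
        start_offset = index * stagger_s  # when this performer went live
        durations[name] = sync_point_s - start_offset
    return durations


if __name__ == "__main__":
    result = environment_durations(START_ORDER, STAGGER_S, SYNC_POINT_S)
    for name, seconds in result.items():
        print(f"{name}: films the environment for {seconds} s")
```

Under these assumptions, whoever starts first films the environment the longest and whoever starts last films it the shortest, so all four tracks reach the first synchronized action together. When the recordings are played back, the videos would be started in the same order and with the same 5-second spacing.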

After that, we had different actions at the same time. For example, when Bao and I entered the screen, Makoto and SuHwee would be pointing at us. This was done by giving different sets of instructions in the Master Controller at the same time. Since each of us could only hear our own track and clear instructions were given, there was no confusion among us, although I was confused countless times while producing the Master Controller because I had to make every command clear and the timing perfect. The tracks include countdowns for certain actions, but not for repeated actions. The hardest part to produce was the Scissor-Paper-Stone part of the broadcast, as everyone was doing different actions at the same time.

Towards the end of the broadcast, many of our soundtracks specifically instructed us to pass the phone to a named person. For example, Bao’s track said “Pass the phone to ZiFeng. 3, 2, 1, DING!” and “Swap phone with Makoto. 3, 2, 1, DING!”. This was done to avoid confusion during the broadcast, rather than saying “pass phone to the left”, which is quite ambiguous.

Overall, there was a lot of planning, and every detail had to be thought out carefully when I was making the tracks, because every small mistake would make our final presentation worse than it should be. I am lucky that I only made one mistake in the Master Controller, which was the direction in which SuHwee and Makoto would be playing their Scissor-Paper-Stone, and we clarified it before our final broadcast.

On the actual day of our broadcast.

The weather forecast said it would rain the whole week, so we did our final broadcast in the rain. Luckily for us, the rain wasn’t too heavy and we could film in the drizzle. We started our broadcast dry, and we ended it wet.

We explored Botanical Gardens for a bit and decided the path each of us would walk. We walked it 3 times before the actual broadcast: the first time when deciding where to go and what it would be like, the second when we walked back, and the third right before we started the broadcast, as we walked 7 minutes to our starting locations. The first 7 minutes of the broadcast would then be us walking back along the same path with the same timing.

We did a latency test by broadcasting a timer for two minutes right before the broadcast. If there had been any latency issues, we could have made minor changes to our timing by calibrating the individual Master Controller tracks to the latency beforehand. Luckily for us, none of us were lagging and we had the best connection possible, so there was no need to re-calibrate the Master Controller. Also, just to mention, since Bao and I already had a calibrated connection from the previous Telematic Stroll (NOT Telepathic Stroll), he didn’t have to calibrate with us again since I was doing it, so we filmed his phone’s timer.
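To make the calibration idea concrete, here is a rough Python sketch of how timer readings could be turned into per-track shifts. It is hypothetical: we never needed to do this, the function name `track_shifts` is invented for illustration, and it assumes that a performer whose stream lags more should simply hear every cue earlier by the extra lag.

```python
# Hypothetical sketch: turn latency-test readings into per-track shifts.
def track_shifts(measured_latency_s):
    """Return how many seconds earlier each performer's track should cue actions.

    Assumption: shifts are measured relative to the least-lagging performer,
    so that performer's track is left unchanged.
    """
    baseline = min(measured_latency_s.values())
    return {name: latency - baseline for name, latency in measured_latency_s.items()}


if __name__ == "__main__":
    # Made-up readings for illustration only. Equal latencies for everyone
    # mean every shift is zero, so no track needs re-calibrating.
    readings = {"ZiFeng": 0.0, "Makoto": 0.0, "SuHwee": 0.0, "Bao": 0.0}
    print(track_shifts(readings))
```

If one performer had lagged, only that performer's track would have needed its cues moved, which is why each of us having a personalized soundtrack made this kind of adjustment possible.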

A recap of Telepathic Stroll:

Our project inspirations

Telepathic Stroll was highly influenced by BOLD3RRR and our lesson on Adobe Connect.

At first glance, one can see the similarity between the Adobe Connect lesson and Telepathic Stroll: we were pointing to the other broadcasts, merging faces and trying to (act) interact with each other in the video wall, just like the exercise we did during the Adobe Connect lesson.

This was the seed of our project: to live in the third space, interact with others, and give a performance by doing things that are only possible in the third space, like joining body parts and pointing at things that seem to be in the same space when they are actually not in the first space.

In our discussions before the final idea, we had many different good ideas that were inspired by music videos like this:

In the end, we only used minimal passing of objects (our faces) in Telepathic Stroll, and we grew our idea from a magic-trick kind of performance into an artistic kind of performance.

I feel really weird that many of my projects this semester were inspired by BOLD3RRR, not in the sense of the style, the appearance or even the presentation, but in the sense of the preparation and the extensive planning before the live broadcast. I have always liked good planning that leads to good execution, and BOLD3RRR really inspired me in this, especially in using pre-recorded tracks and incorporating them into a live broadcast so that they blend into the live aspect of it. This time, instead of using pre-recorded tracks and images over the broadcast like in our drunken piece (which was also highly influenced by BOLD3RRR), we evolved the idea into using a pre-recorded track in the background to sync all of our movements even when we could not see or hear each other.

Over the multiple class assignments in which we went live on Facebook, we figured out many limitations of broadcasting:

- If you go live, you can’t watch others’ live broadcasts unless you have another device, and if you can’t watch others while broadcasting, two-way communication is practically impossible.

- Even if we have another device to watch on, there will be a delay of at least 7 seconds.

- If you co-broadcast, you can see the others live, but if we discuss things through the co-broadcast during a performance, the viewers can see it too, which is not the effect we wanted in our final project.

- Co-broadcasting lowers the video quality.

- There may be lag in the connection, which causes the “!” during the live broadcast and skips in the recorded playback. This must be overcome by all means.

This is why doing individual broadcasts with careful planning and our Master Controller system overcame all of the limitations we faced, and with the calibration before the final broadcast, most of the problems were solved.

Our idea of social broadcasting

In Telepathic Stroll, we are trying to present the idea of social broadcasting in a way that is like real-world social life: the collective social.

In our individual broadcasts, we could not know what the others were doing or feeling. Yet when we are placed together in one location (both the physical and the third space), we can interact with each other and do amazing things that an individual can’t do. In Telepathic Stroll, even though each of us was just doing our own task without knowing the status of the others, by doing these individual tasks collaboratively we united as a group and formed a single unit. Every member is as important as all of us, and if any of us were removed, the whole structure would collapse into a Problematic Stroll, nothing more than just an individual broadcast.

If that isn’t social, what is?

Team Telepathic Stroll: signing out.

At the end of the Scissor-Paper-Stone segment, we all synchronized to the same action, paper, and Bao and I were counting on 5, so we were all showing our palms.

I really like this random shot before the Stroll.

After the stroll, when the sun finally rose.

Luckily for me, right after we finished the broadcast, it started to drizzle. And did I mention that we were supposed to do it on Monday morning? We woke up at 5 a.m. and it was raining, so we postponed our stroll. It was really lucky for us that it wasn’t raining on Tuesday morning even though the weather forecast said it would.

This is the segment where we pass the phone to the next grid. We hope to achieve an effect that feels like we are moving in a straight line instead of passing in a circle; we will only know the final effect when we view it in the grid.

And this: one video alone won’t have any effect, but we hope to achieve a panorama spin effect through this when the grid is out.

“Live” me flying over to point at “third space” me

The top right (pre-recorded) and bottom right (live) go to the desktop at around the same time, and you can see the changes that happened on my desktop within those few days.

I was talking about how Korean manga is usually in a long strip format, but the website where I read it (MangaFox) cuts it into a page format.

Thank you!!! =D

– Playing charades through the work. From the video