Currently, the big idea is:

Playing around with Unreal:

I wanted to try out Unreal's water plugin and also the idea of floating actors.

However, at this point everything felt slightly flat, and I wanted a helium-balloon float, not a water float.

I also tried connecting Unreal with TouchDesigner via OSC, potentially for the mic input.

Space

While building my space, I just wanted to see what I could do with the free packages in the marketplace.

The following are the ones I used:

- (mainly for the rocks) https://www.unrealengine.com/marketplace/en-US/product/soul-cave

- (pretty fireflies) https://www.unrealengine.com/marketplace/en-US/item/91e62feae7104ce6a2c471454cc4b683

- (other type of rocks) https://www.unrealengine.com/marketplace/en-US/product/paragon-agora-and-monolith-environment

- (fun torches etc) https://www.unrealengine.com/marketplace/en-US/product/a5b6a73fea5340bda9b8ac33d877c9e2

- https://www.unrealengine.com/marketplace/en-US/product/9efde82ef29746fcbb2cb0e45e714f43

And this is what I created:

I retained the water element, but wanted to build on it. During the week of building, I wanted to focus on lighting, perhaps because of this paragraph from a book I was reading (Steven Scott: Luminous Icons):

Obviously I am no Steven Scott, but I really like the idea of experiencing “something spiritual”, and it is also in line with the ‘zen’ idea of my project. While my project has no direct correlation with “proportion”, I still wanted to incorporate this idea by building lights with the ‘correct’ colours (murky blue-ish) and manifestations (light shafts, fireflies).

Projection

You would think that just getting it up on the screen would be easy: just make the aspect ratio the same as the 3 projectors. That’s what I thought too…
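As a quick sanity check on that aspect ratio, here is a minimal sketch of the arithmetic for projectors placed side by side. The 1920x1080 resolution and the optional edge-blend overlap are assumptions for illustration; the post does not state the actual projector specs.

```python
from math import gcd


def combined_canvas(width, height, count=3, overlap_px=0):
    """Return (total_width, height, reduced_ratio) for projectors in a row.

    overlap_px is the blend overlap between each neighbouring pair
    (hypothetical parameter, 0 means the images simply butt together).
    """
    total_width = width * count - overlap_px * (count - 1)
    divisor = gcd(total_width, height)
    return total_width, height, (total_width // divisor, height // divisor)


# Assumed example: three 1080p projectors with no overlap.
print(combined_canvas(1920, 1080))  # (5760, 1080, (16, 3))
```

The render target in Unreal would then need to match the total width and height, not just the ratio.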

Anyway summary:

Spout: doesn’t work with 4.26; the latest supported version is 4.25

Syphon: Unreal doesn’t support it

Lightact 3.7 (which doesn’t Spout): couldn’t download it on Mac or on the school’s PC, where it was considered unsafe because of its low number of downloads

BUT I did try nDisplay, which is built into Unreal.

I could get it up on my monitor.

I couldn’t get it up on the projectors, though. I think that could be due to my config files, and I need perhaps another week or so on this.

Interface

With the interface, this was the main idea:

Plan 1 would have made everything seamless because it is all integrated into Unreal. But ofc

So I am back with Plan 2, via OSC.

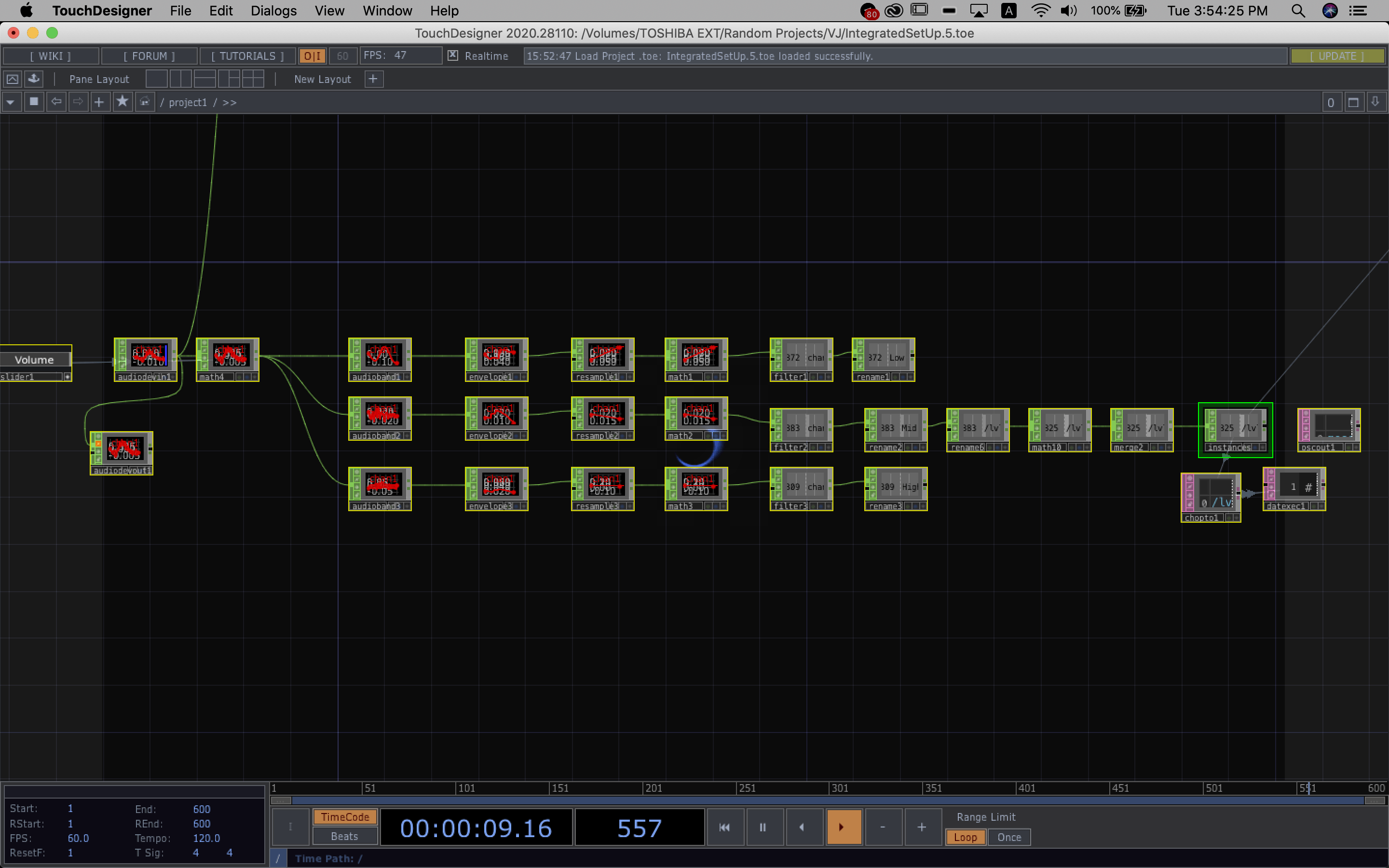
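For context, this is roughly what travels over the wire in Plan 2. TouchDesigner's OSC output operators normally build the packet for you, so the sketch below is only an illustration of the OSC 1.0 message layout for a single float. The address `/mic/mid`, the host and the port are hypothetical placeholders, not values from this project.

```python
import socket
import struct


def osc_pad(data: bytes) -> bytes:
    """Null-terminate and pad to a multiple of 4 bytes (OSC 1.0 string rule)."""
    data += b"\x00"
    return data + b"\x00" * (-len(data) % 4)


def osc_float_message(address: str, value: float) -> bytes:
    """Build an OSC message carrying one big-endian 32-bit float."""
    return osc_pad(address.encode("ascii")) + osc_pad(b",f") + struct.pack(">f", value)


def send_value(address: str, value: float, host: str = "127.0.0.1", port: int = 8000) -> None:
    """Send one OSC float over UDP (host and port are assumed placeholders)."""
    with socket.socket(socket.AF_INET, socket.SOCK_DGRAM) as sock:
        sock.sendto(osc_float_message(address, value), (host, port))


packet = osc_float_message("/mic/mid", 0.5)
print(len(packet), packet)
```

Each value is a tiny UDP datagram, so the message format itself is unlikely to be where the delay comes from.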

On TouchDesigner’s end:

I have basically managed to split the audio that comes in via the mic into low, mid and high frequencies. For now, I’m only sending the mid frequency values over to Unreal because they represent the ‘voice’ values best. But I had to clamp the values because of the white noise present. I might potentially use the high and low values for something else, such as colours? We’ll see.

For now, one of the biggest issues I feel is the very obvious latency from TouchDesigner to Unreal.
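The clamping step can be sketched like this: anything below a noise floor is treated as silence, anything above a ceiling is capped, and the result is rescaled to 0..1 before being sent. The function name and the two threshold values are assumptions for illustration; in TouchDesigner this would more likely be done with CHOPs than with a script.

```python
def gate_and_normalize(level, noise_floor=0.05, ceiling=0.6):
    """Clamp a band level to [noise_floor, ceiling] and rescale it to 0..1.

    noise_floor and ceiling are made-up values; they would need tuning
    against the actual mic and room.
    """
    clamped = min(max(level, noise_floor), ceiling)
    return (clamped - noise_floor) / (ceiling - noise_floor)


for raw in (0.01, 0.05, 0.325, 0.9):
    print(raw, "->", round(gate_and_normalize(raw), 3))
```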

But moving forward first, the next step would be to try getting an organic shape and also spawning the geometry only when a voice is detected.

Since I wanted the geometry to ‘grow’ in an organic way, I realised the best way to go about it would be to create a material that changes according to its parameters rather than making an organic static mesh, because when a static mesh gets manipulated by the values it’s literally just going to scale up or down.

This is how I made the material:

Using noise to affect world displacement was the main trick for getting the geometry ~shifty~. But as you can probably tell from the video, the latency and the glitching are really quite an issue. Once again moving on: trying to spawn the actor in Unreal when a voice is detected.
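One common way to soften this kind of glitching is to smooth the incoming values before they drive the material parameter. This is a swapped-in technique, not something the project is confirmed to use: a minimal exponential smoothing sketch, with `alpha` as an assumed tuning value.

```python
class Smoother:
    """One-pole low-pass filter: each update moves part of the way to the new sample."""

    def __init__(self, alpha=0.2, initial=0.0):
        if not 0.0 < alpha <= 1.0:
            raise ValueError("alpha must be in (0, 1]")
        self.alpha = alpha      # 1.0 = no smoothing, smaller = smoother but laggier
        self.value = initial

    def update(self, sample):
        self.value += self.alpha * (sample - self.value)
        return self.value


smoother = Smoother(alpha=0.5)
print([smoother.update(x) for x in (1.0, 1.0, 0.0, 1.0)])
```

The trade-off is that a smaller `alpha` hides jitter but adds its own lag, so it would help with the glitch and not with the latency.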

For that I am using a Button state in TouchDesigner. When a frequency hits a threshold, which I assume comes from the voice, the button is activated. When the button is on (‘1’), the geometry should form and grow with the voice; when the button is off (‘0’), the geometry should stop growing.

This is the button I currently have, but it still keeps flickering between ‘on’ and ‘off’, so I am starting to think I should change the rule to ‘when a change in frequency is detected, the button clicks’ instead.
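Flicker like this often happens when the level hovers around a single threshold. One possible fix, offered here as an alternative and not as what the project ended up doing, is hysteresis: a higher threshold to switch on and a lower one to switch off. The class name and both threshold values are hypothetical.

```python
class HysteresisButton:
    """Button that turns on at on_threshold and only turns off below off_threshold."""

    def __init__(self, on_threshold=0.3, off_threshold=0.15):
        if off_threshold >= on_threshold:
            raise ValueError("off_threshold must be lower than on_threshold")
        self.on_threshold = on_threshold
        self.off_threshold = off_threshold
        self.state = 0

    def update(self, level):
        if self.state == 0 and level >= self.on_threshold:
            self.state = 1
        elif self.state == 1 and level <= self.off_threshold:
            self.state = 0
        return self.state


button = HysteresisButton()
levels = [0.1, 0.32, 0.28, 0.31, 0.2, 0.1, 0.05]
print([button.update(x) for x in levels])  # [0, 1, 1, 1, 1, 0, 0]
```

With a single threshold at 0.3 the same input would toggle on, off, on, off, which is the flicker described above.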

To be continued…

Stage Creation/Platform!!!

Step 1: Idea

Step 2: Get materials

Instead of getting a foldable stage, getting crates was just much cheaper and made more sense since they already had slits, so there is space to insert the bulbs.

Next was working out the electric circuit and the placement of the bulbs. Mainly it was inserting 4 bulbs (2 at the edges, 2 in the middle). Initially I was thinking of doing 3, but the middle part of the crate was blocked with wood. Instead of drilling into the wood (which would have been dangerous, since the crate might no longer support the weight it was originally intended for), I decided to just have 2 in the middle.

The parallel circuit mainly consisted of the 4 bulbs, 4 starters and 4 ballasts, plus an additional 12 clips to hold the bulbs in place.

Step 3: Installation

Left the paper sleeve on because the 4 bulbs were brighter than I expected

Underside view of the crate


Since there was too much light penetration through the slits, I think I will cover them up with a cloth to achieve a more diffused look.

Left to do: attach the 2nd crate below to make the platform higher, sand the crate for safety, and attach the cloth.

Interface

Updates from the previous stage: I have made the spawned object move randomly within a bounding box, and experimented with the call that spawns the object.

What I realise is that we don’t speak in one breath. Because we break our sentences up as we speak, the pauses in between would cause many, many objects to be spawned.

Instead, I think a more efficient way of doing this would be to have a button participants can press while they are speaking.
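For comparison, the pause problem could also be softened in software with a hold time, so that short gaps between words do not count as the end of an utterance. This is only a sketch of that alternative, not the approach chosen here. The function name and the frame counts are assumptions.

```python
def count_utterances(voiced_frames, hold_frames=30):
    """Count how many objects would spawn if pauses shorter than hold_frames are bridged.

    voiced_frames is a sequence of truthy/falsy values, one per frame.
    """
    if hold_frames < 1:
        raise ValueError("hold_frames must be at least 1")
    utterances = 0
    silent_run = hold_frames  # treat the start as a long silence
    for voiced in voiced_frames:
        if voiced:
            if silent_run >= hold_frames:
                utterances += 1  # a new utterance starts here
            silent_run = 0
        else:
            silent_run += 1
    return utterances


# Speech, a short pause, more speech, a long pause, speech again.
frames = [1] * 10 + [0] * 5 + [1] * 10 + [0] * 40 + [1] * 5
print(count_utterances(frames, hold_frames=30))  # 2
print(count_utterances(frames, hold_frames=1))   # 3
```

A physical button avoids having to tune the hold time at all, which is a fair argument for it in an installation setting.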

Right now, I am still not too happy with the way it moves. I wanted more of a zero-gravity floating kind of movement, but it’s like it is doing the zoomies now.

Also, the way it spawns is so ugly: it just appears at the target point, but I am looking for more of a blowing-bubbles vibe.

At this point, I was trying to decide how users were going to hear the recorded audio (the muffled loud noise is me recording; not too sure what’s up with the recording). I decided that the best way was to have the user go near the object. This way, specific audio can be played with specific spheres instead of just playing a random one, which might lead the user to hear the same audio twice.
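One way to get a floatier feel is to nudge the velocity by a small random amount each frame, instead of jumping to new random targets, and to damp it so the object drifts. This is a rough sketch under that assumption; the cause of the current zoomies is a guess, and every name and constant below is hypothetical. In Unreal the same logic would live in the actor's tick.

```python
import random


def drift_step(position, velocity, bounds, rng,
               accel=0.02, damping=0.98, max_speed=0.5):
    """Advance one frame of slow, damped random drift inside a bounding box.

    position and velocity are per-axis lists; bounds is a list of (low, high) pairs.
    """
    new_position, new_velocity = [], []
    for p, v, (low, high) in zip(position, velocity, bounds):
        v = (v + rng.uniform(-accel, accel)) * damping   # small random push, then damping
        v = max(-max_speed, min(max_speed, v))           # cap the speed
        p += v
        if p < low or p > high:                          # soft bounce off the box wall
            p = max(low, min(high, p))
            v = -v * 0.5
        new_position.append(p)
        new_velocity.append(v)
    return new_position, new_velocity


rng = random.Random(1)
box = [(-10.0, 10.0)] * 3
pos, vel = [0.0, 0.0, 0.0], [0.0, 0.0, 0.0]
for _ in range(200):
    pos, vel = drift_step(pos, vel, box, rng)
print([round(c, 2) for c in pos])
```

Lower `accel` and higher `damping` give a lazier float; the values here are arbitrary starting points.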

So getting from this stage to the next consisted of:

- Setting up 2 views: one for the player who is going to listen to the audio, the other for the player recording (I will touch on this more later when incorporating the VR portion)

- Having to spawn both the sphere and the audio into separate arrays and give them IDs, so that I can match the index of the sphere to the same index of the audio

(in Level Blueprint)

- Getting the component to float and hover away only when the audio has finished recording

(in Actor Sphere Blueprint)

- Setting up the whole overlap shenanigan

- Somehow importing the audio from the local drive at runtime (managed to find a plugin for this https://www.unrealengine.com/marketplace/en-US/product/runtime-audio-importer?sessionInvalidated=true)

(in Actor Sphere Blueprint)

- Also needed to stop the already spawned audio from recording again when the same button is pressed; if not, they (meaning the audio textures) will overwrite despite already having different file names (using a ‘Called’ boolean)

(in Actor Sphere Blueprint)

- Setting up the texture/fx to alert the player when they have made contact with the floating sphere

Made using a particle system + ‘Move to Nearest Distance Field Surface GPU’
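The index matching and the ‘Called’ guard from the list above can be sketched outside of Blueprints like this. It is an illustration of the idea only: the class name, method names and the `recording_N.wav` file pattern are all hypothetical, and the real version lives in the Level and Actor Sphere Blueprints.

```python
class RecordingRegistry:
    """Parallel arrays: sphere i always pairs with audio file i."""

    def __init__(self):
        self.spheres = []
        self.audio_files = []
        self.called = []   # True once a sphere has finished its one recording

    def spawn(self, sphere_name):
        """Register a new sphere and reserve an audio slot at the same index."""
        index = len(self.spheres)
        self.spheres.append(sphere_name)
        self.audio_files.append(f"recording_{index}.wav")  # assumed naming scheme
        self.called.append(False)
        return index

    def record(self, index):
        """Return the file to record into, or None if this sphere already recorded."""
        if self.called[index]:
            return None
        self.called[index] = True
        return self.audio_files[index]

    def audio_for(self, index):
        """Audio to play when a listener overlaps sphere `index`, if it has one."""
        return self.audio_files[index] if self.called[index] else None


registry = RecordingRegistry()
first = registry.spawn("sphere_a")
print(registry.record(first))   # recording_0.wav
print(registry.record(first))   # None, the second press is ignored
```

Because each sphere owns exactly one index, a listener walking into a sphere always hears that sphere's recording and never a random one.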

Virtual Camera test:

At this point the virtual camera was way too laggy for anything. Initially I wanted to make it a mobile game, but I would have needed to remove too many things to fit the graphics features of the mobile version, so I decided to go with VR instead.

The biggest challenges I faced at this stage were:

1. Making an asymmetrical multiplayer game: 1 VR, 1 PC

2. Replication – to have the server and client seeing the same thing happening

3. Setting up a third-person VR view, because it’s usually first-person

Final

Final Thoughts:

This project formed as it developed. Even in the last week, I was still deciding on the set-up and the method of performing the interaction, whether it be virtual camera, phone, or VR. What I did know was how the space was supposed to feel, and the fundamental idea of breaking up the steps of having a simple conversation. With the vision of wanting others to find solace in the complexity of life, to understand that everyone out there is on their own journey, I wanted to bring everyone back to the idea of ‘Talking’ and ‘Listening’ and ‘Seeing’. Technically, the whole project doesn’t have to be split up into these 3 “stations” and could potentially be just one entire game on one PC. However, I was determined to pull through with the setup in the dance room because of the concept that when times are tough, you need to split things up and intentionally take them one at a time. And, in the midst of it, to seek comfort in the realisation that each random passerby is living a life as vivid and complex as your own.
