
Final Project – Test Run

Hannah & Joan live broadcast

https://www.facebook.com/littleangel.hannah/videos/10159446580005425/

Cher See & Xin Feng live broadcast

https://www.facebook.com/sim.xinfeng/videos/10155367381841888/

Combined broadcast on OBS

https://www.facebook.com/goh.chersee/videos/10212224901431949/

Roles: Cher See (Police), Xin Feng (Police), Hannah (Thief), Joan (Thief)

Co-broadcasting reflection

One of the technical difficulties that we faced while broadcasting was the lag in our stream. We kept disconnecting from one another, and the constant need to stay connected to the internet limited our choice of hiding spots. The problem was that our stream requires a certain level of responsiveness: the time delay between the live feeds could cause confusion and disarray in our communication with one another.

The audio quality was surprisingly loud and clear and the vocal communication between the co-broadcasters was smooth. At the beginning of the broadcast, we were all in the same room and the echo of audio transferred between the five devices was very interesting. The close proximity of the devices also created some audio feedback. At around 00:13 & 24:00, we experimented with this echo and feedback effect.

As the race started, the thieves headed off to various hiding spots and were able to converse easily through the broadcast. We also noted that the clear audio allowed the policemen to eavesdrop easily on the thieves’ conversation and deduce their locations. The comment section on Joan and Hannah’s live feed ran smoothly and did not run into any problems. Cher See (police) posted some riddles there for the Thief team to guess.

Future Improvements

For the thieves, we could improve our camera angles and reveal more of our locations, which would create more interesting footage. It would also give the policemen a better idea of where we are and help them regulate the race.

As for the riddles given by the policeman in the headquarters, if the thieves are unable to guess one, another riddle will be given. If the thieves are able to solve it, the policemen will reveal one of the numbers of the padlock. The police could also swap positions with each other so that the police team would not tire themselves out.

We could also add special effects and sound effects, and make our combined stream more aesthetically pleasing.

Research Critique – Second Front

Second Front is an online performance art collective based in Second Life, an avatar-based virtual world. The seven-member group consists of Gazira Babeli (Italy), Yael Gilks (London), Bibbe Hansen (New York), Doug Jarvis (Victoria), Scott Kildall (San Francisco), Patrick Lichty (Chicago) and Liz Solo (St. John’s). They have performed live, remotely from different locations, while being screened in various cities, galleries and museums.

Why Second Life?

“In many ways, Second Life operates as a fantastical dream state. We can fly, teleport and pick up houses and cars.” – Great Escape

VR may not be new to the gaming world, but it is relatively fresh and unexplored territory in terms of making art, and in this case, performance art. Second Front sees Second Life as a venue for creative artistic expression. Most audiences are still unfamiliar with the idea of performance art in a virtual world, and are likely to be drawn in by curiosity.

As a medium, Second Life is fully customisable and, unlike the real world, has no boundaries. It allows endless possibilities for creativity, opening up room for absurd artistic expressions that could defy the laws of nature of the real world.

It allows for crazy, large-scale works that would be extremely costly to reproduce, or not achievable at all, in real life. For example, in Grand Theft Avatar, large venues and props like the H-bombs, huge banks, helicopters and flying moneybags would look distracting and unconvincing in the physical world, and the same effect would not be achieved. Even with low-quality graphics, these bizarre effects can be seamlessly produced in virtual reality, without constantly reminding audiences of the fact that it is not real.

Second Life vs Real Life

In the interview, it was mentioned that there was a ‘virtual leakage’ between the virtual and reality, blurring the line between the two. In a way, there is a ‘spill-over’, where the virtual world becomes more real and reality becomes a little more virtual, through control and extension of the self. I thought it was interesting how the artists perceived their virtual selves in Second Life in relation to their real selves.

Extension of self:

The physical and the virtual identity can be perceived as two separate entities, where the avatar acts as a puppet controlled by the real self. The avatar, however, has freedom and ability to do things beyond the limits of the physical world. Therefore, the avatar is a virtual extension of the physical self, through which artists can fully express themselves through unconventional means.

Embedding within self:

“I think that the avatar Great Escape occupies a strange nook in my subconscious. When I go to sleep at night, images of the other Second Front members often fill my head. So for me, my avatar is embedded in my psyche, rather than an extension of myself.” – Great Escape

The two identities can also be seen as different versions of the same person. While the physical body lives in the real world, the virtual one lives in the subconscious. The artist can switch between the two identities, however different or similar they may be.

Conclusion

Second Front creates unique, unconventional art forms through Second Life, and surprises viewers with crazy antics that would otherwise be unachievable through traditional mediums. They are opening paths towards a new kind of art in today’s technologically advanced world, and their work is definitely worth anticipating.

References

http://rhizome.org/community/38893/

http://www.secondfront.org/

http://www.voyd.com/secondfront.html

https://www.youtube.com/watch?time_continue=285&v=RoHctMuI_HU

Co-broadcasting Experience

https://www.facebook.com/littleangel.hannah/videos/10159394268150425/

The co-broadcasting experience was great, as the split screen function was easily accessible and the connection was also smoother, as compared to OBS.

It was convenient, as the main broadcaster could see both sides simultaneously, possibly allowing more room for coordination during broadcasts. The person invited into the broadcast could also hear the main broadcaster, allowing for smoother responses and interaction. For my broadcast with Hannah, we were walking quite a distance apart, but within the same area (B1 carpark). For most of the video, we managed to converse through the broadcast without much difficulty, as the sound quality was pretty decent.

We did lose connection once or twice (as usual, carpark wifi is bad), but quickly reconnected without too much lag. What I found confusing was that the resulting video on Facebook was choppier than what we saw while we were broadcasting through our phones.

Overall, it was a pleasant experience, and a new way for us to broadcast for our final project!

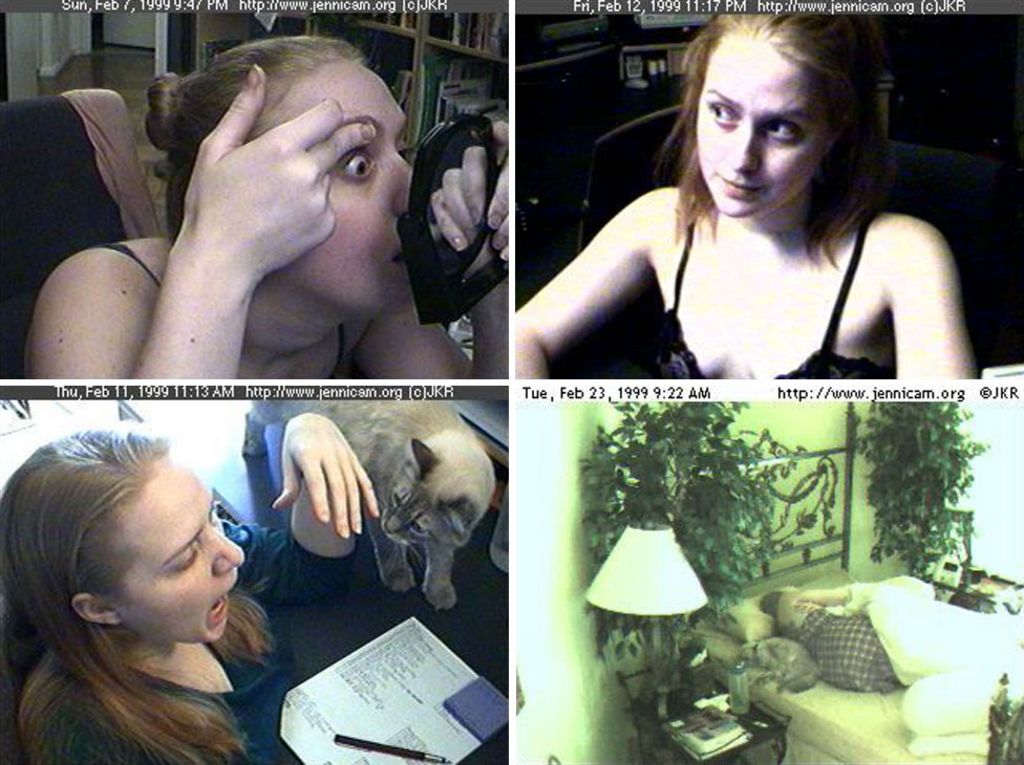

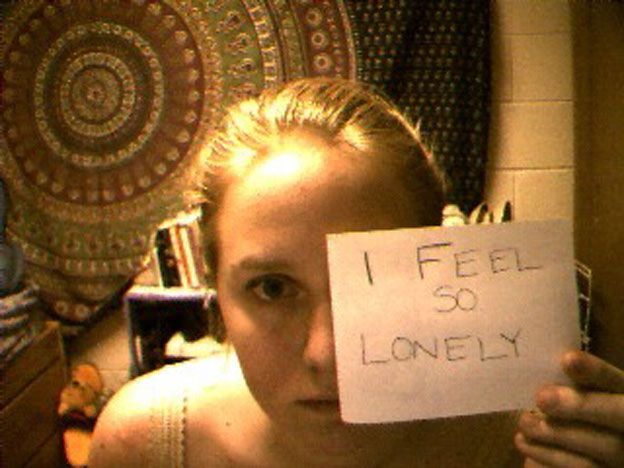

Research Critique – Jennicam

In 1996, Jennifer Ringley was the first person to broadcast her life online. It started when she was in college, where anyone with internet access could watch her through her photos that were updated every three minutes. Months later, her experiment spiraled into a global sensation, attracting up to four million paid views per day.

She was her own reality TV, in the sense that everything was uncensored and unedited, or, as she would describe it, a “virtual human zoo”. Just as visitors pay to watch animals go about their daily lives, Jennicam viewers paid to watch her go about hers.

So, what makes Jennicam interesting?

“One of my favourite emails I got last year, I got a message from a guy, saying he was in college, it was a Friday night and all of his friends were out. He felt like a loser because he was sitting at home.

But he tuned into Jennicam, and I was there doing my laundry. So he said it made him feel better because I’m popular.”

Firstly, it showed the most human side of things, not just the best side. In a way, Jennicam was comforting for those who felt lonely, as it showed the most authentic, human side of a person who was supposedly popular. It made her relatable, and perhaps made viewers feel like less of a loser. Most people on the internet (then and now) show only the best sides of themselves: for example, a gamer would stream his best game, a stripper would stream her most provocative side, and a makeup artist would stream herself looking flawless. Jenni, on the other hand, lived her life in front of the camera for seven years, truly capturing the ‘real-ness’ of her ordinary life.

Secondly, viewers were entranced by the story of Jenni’s life, with the occasional glimpses of nudity and private, personal moments. Mundane as everyday life is, viewers can’t watch anything like that in the physical world, not without being close to the person or getting arrested. At a time when the Internet was relatively new, Jennicam was a completely new form of entertainment and provided people with a brand new experience. People were willing to pay and spend time watching her, out of curiosity or excitement at the small chance of catching her doing something “happening”.

Thirdly, this experiment was not something that everyone could do, despite seeming innocent at first glance. On David Letterman’s show, Jenni mentioned that while she is completely comfortable with doing everything on camera, she understands that everyone has their own boundaries. Jennicam was an example of data over-sharing. She may have been comfortable with sharing every detail of herself with the world, but she could never know who was watching and what their (possibly malicious) intentions could be. After all, her whereabouts and activities were just one click away. Even 14 years after she shut down and deleted her website, her images and data are still easily accessible on other sites and she can never truly remove herself from the Internet.

Conclusion

Overall, Jennifer Ringley’s personal experiment was indeed a remarkable journey that contributed to the mass personal data sharing we have today. It evolved from innocent sharing amongst friends into a global sensation, sparked several controversies, and is now offline. Her experiment shed light on the technological advancement and massive reach of data sharing (from one to many) over the past two decades. Now that it has completely shut down, it also raises questions about over-sharing of data, internet security and online expression in the digital age.

References

http://www.bbc.com/news/magazine-37681006

http://www.news.com.au/technology/online/social/patient-zero-of-the-selfie-age-why-jennicam-abandoned-her-digital-life/news-story/539cd1b26016fcee1a51cfca3895a7b5

https://www.youtube.com/watch?time_continue=279&v=0AmIntaD5VE

HUMAN+

The trip to the ArtScience Museum was definitely an enriching one, as it gave me a glimpse of what our future may become. I felt like I had stepped into a different world, with exhibits ranging from hyper-realistic to complex or simply quirky. I enjoyed interacting with most of the works, as they helped me understand a little better where our rapid development in technology is heading. The nature of the works in this exhibition was very different from what we are normally used to, or have worked on in school. In this case, the works are directly integrated with the artists (or humans) rather than separate from them. The exhibition teaches us that technology can not only benefit us in the conventional way, but can also be integrated within our biological selves as an extension of our abilities and senses.

Techno-biological performance artist Stelarc

Performance at Daedalus, Fringe World, Chrissie Parrot Arts, Perth

Sound by Petros Vouris

Assisted by Tim Jewell, Steve Berrick, Alwyn Nixon-Llyod, Steven Aaron Hugues, Rodney Parsons, Paul Caporn

StickMan features Stelarc, strapped onto a custom-engineered robotic exoskeleton that choreographed his movements remotely through a computer algorithm. The system could generate up to 64 possible combinations of movement, with the help of accelerometers and gyroscopes attached throughout the robot. As it moves continuously, his body movements are tracked simultaneously. Fluctuating waves of sound are also produced as the spine and limbs shift.

Thoughts

Stelarc’s works were shown near the beginning of the exhibition, and they certainly captured my attention despite being only in video form. I was first captivated by the uninterrupted, seamless movement of the exoskeleton that Stelarc was strapped onto. Its swift movements, twists and turns reminded me of a sci-fi action movie, like Transformers or Pacific Rim. As I continued watching the video, many thoughts came to my mind. How is he so calm? Isn’t it uncomfortable? Doesn’t he feel trapped?

Upon reading the artist statement and description, I had a better understanding of the reasons behind Stelarc’s robotic creations. His works blur the lines between the human body and robotics, revolving around the concept of the human body becoming obsolete. Unlike many sci-fi movies where humans control the robots, Stelarc lets his robots take control, in this case of his body movements. For five hours, he was robbed of his ability to move freely, and was instead controlled by his machine, which was receiving unknown inputs from a computer algorithm.

Our heavy reliance on technology has yet to reach the point where robotic implants are considered a norm. Stelarc’s concepts are hence very unique, as most people are not used to these kinds of robotic experiences, whose dangers include technological failure, claustrophobia, pain or bodily harm. He looks beyond the physical discomfort of the body and focuses instead on the extension of the human body, enhancing basic human abilities through the integration of electronics. One of his notable works (see below), Ear on Arm, was a surgical modification that remotely shares what he hears in real-time with the rest of the world.

Personally, I feel that the world of electronic implants and smart robots lies on the path of future human evolution. While some ethical issues and complexities may come into play, these explorations and creations will nevertheless arouse curiosity and interest in people, and perhaps help our kind.

References

http://steve.berrick.net/stickman

http://www.marinabaysands.com/museum/human-plus/augmented-abilities.html#rwT6uWzZVcuezOti.97

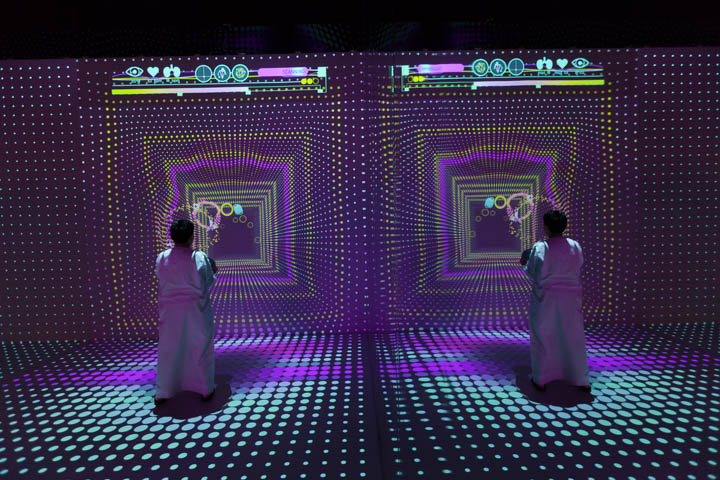

Device of the Week #3 – FITZANIA

Fitzania is a preventative medical checkup disguised as an immersive game. Its aim is to encourage citizens to return regularly for health check-ups and fitness tests, by making healthcare a more personalized, stress-free and enjoyable part of everyday life. It allows players to have fun and immerse themselves comfortably in a game world, while analysis and diagnosis happen naturally at the same time. The setup consists of a spacious open room with digital walls and a single orb for players to carry during the game.

HOW IT WORKS

Players can lift the orb from the pedestal as they verify their personal information and calibrate their body movements with the system. Upon successful log-in, the orb vibrates, signaling the start of the game.

Fitzania makes use of a unique tracking system to map player data in the virtual space. Each orb is also treated with halo retro-reflective coatings, allowing easy detection by the sensors and plotting of the player’s precise location. As the player moves and positions the orb in the physical game space, several sensors around the room detect and analyze the biometric signals collected. The game provides on-the-spot diagnosis and updates the player’s personal fitness profile, allowing the player to receive his or her results right after.

WHERE IS IT?

Museum of Future Government Services, Dubai, UAE, 2015

THOUGHTS

This is certainly a refreshing approach towards healthcare, with easy calibration and few props. It pushes the boundaries of conventional games by combining health diagnosis with fun.

However, this setup could be a rather pricey investment. It may also only be applicable to youths, as the activity could be physically draining for kids or the elderly. Furthermore, the scope of the fitness diagnosis is perhaps limited or too general across all patients, so a game alone may be unable to determine specific illnesses or health problems.

Perhaps this game can be installed in large hospital buildings or gyms as a form of entertainment with which visitors or patients can interact. Rather than serving as an official diagnosis, it can be a fun alternative way for people to understand the importance of health check-ups and get a general idea of their physical well-being.

References

http://scatter.nyc/fitzania/

https://www.designboom.com/technology/specular-projects-fiztania-interactive-installation-08-07-2015/

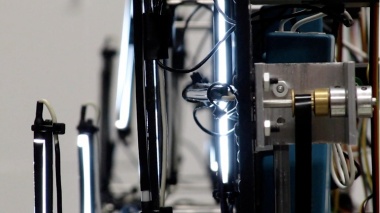

Device of the Week #4 – Four Letter Words

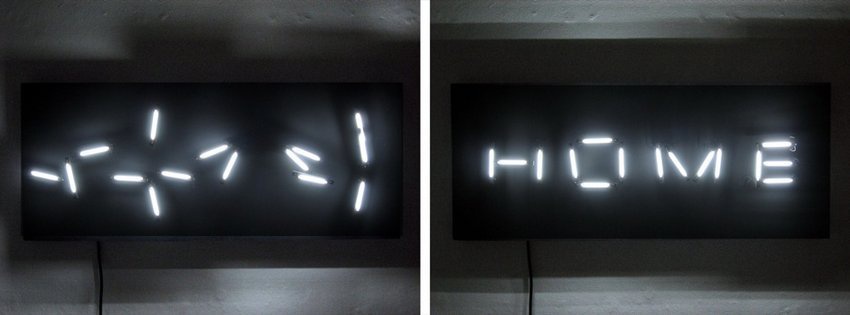

Four Letter Words is an installation by interactive artist Rob Seward, consisting of a robotic collection of fluorescent lights. The setup is separated into four units, each capable of displaying any of the 26 letters of the alphabet by shifting the placement of lights. Hence, any four-letter word can be displayed at one time.

The word sequence displayed is continuously generated by an algorithm derived from a linguistic database developed by the University of South Florida. The meaning of each word, the letter sequencing, rhyming and association are all taken into account with each generated word. For example, each word differs from the previous word by exactly one letter: RATE – RAKE – LAKE – WAKE – WAGE – WARE – DARE – DARN
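The one-letter-difference rule in the example above can be checked with a few lines of Python. This is only a minimal sketch of that single rule: the function names are my own, and it does not reproduce the artist's actual algorithm or the University of South Florida database, which also weigh meaning, rhyme and association.

```python
def differs_by_one_letter(a, b):
    """Return True when two equal-length words differ in exactly one position."""
    if len(a) != len(b):
        return False
    return sum(x != y for x, y in zip(a, b)) == 1


def is_valid_sequence(words):
    """Return True when every word differs from the one before it by one letter."""
    return all(differs_by_one_letter(prev, nxt) for prev, nxt in zip(words, words[1:]))


# The example sequence quoted in the post.
SEQUENCE = ["RATE", "RAKE", "LAKE", "WAKE", "WAGE", "WARE", "DARE", "DARN"]

if __name__ == "__main__":
    print(is_valid_sequence(SEQUENCE))
```

Running the check on the quoted sequence confirms that every step changes exactly one letter.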

What is needed

4 Arduinos

20 servos

8 stepper motors

24 3.9 inch cold cathode lights

How it works

The positions of the lights are stored in an XML file, while a Mac mini runs Processing code that handles sending the data to the four Arduino boards. The application reads the list of generated words and sends the data over. The position and angle of each light bulb change to form any letter from A to Z, moving fairly quickly. Rob Seward mentioned that there were certain letter transitions that the device could not carry out, due to the arrangement of the light bulbs. For example, if ‘S’ switches to ‘D’, two of the bulbs would collide. The Processing code ensures that none of these transitions happen, to avoid collisions and damage.
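To illustrate the collision check described above, here is a small Python sketch of how forbidden letter transitions might be filtered out. It is an assumption-laden illustration, not the installation's real Processing code: the function names are hypothetical, and the forbidden table contains only the ‘S’ to ‘D’ pair named in the text, whereas the real list is presumably longer.

```python
# Hypothetical table of unsafe (from_letter, to_letter) pairs.
# Only S -> D is mentioned in the source; any other entries would be guesses.
FORBIDDEN_TRANSITIONS = {("S", "D")}


def blocked_units(current, nxt, forbidden=FORBIDDEN_TRANSITIONS):
    """Return the indexes of display units whose letter change is forbidden."""
    return [i for i, pair in enumerate(zip(current, nxt)) if pair in forbidden]


def first_safe_word(current, candidates, forbidden=FORBIDDEN_TRANSITIONS):
    """Return the first candidate that needs no forbidden transition, or None."""
    for word in candidates:
        if not blocked_units(current, word, forbidden):
            return word
    return None


if __name__ == "__main__":
    # SAME -> DAME would move unit 0 from S to D, so SALE is chosen instead.
    print(first_safe_word("SAME", ["DAME", "SALE"]))
```

Checking each of the four units independently mirrors the physical layout, where each letter is formed by its own group of lights.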

Advantage

As a functional piece, I feel that this device would add an exciting touch in place of existing neon signs at restaurants, bars or other places of entertainment. It could display names or short, meaningful words pertaining to its surroundings, or simply move around to form an interesting design.

It could also be installed as an art piece in museums or hotel lobbies where visitors can sit and admire.

Disadvantage

The length of each word displayed is restricted by the number of Arduino setups, and only one word appears at a time. While the point of the work revolves around word association and rhyming, the same device would be unable to display other types of textual content, unless produced in a large-scale setting.

References

https://www.psfk.com/2010/03/video-robotic-installation-generates-phrases-with-physical-type.html

http://arduinoarts.com/2014/05/9-amazing-projects-where-arduino-art-meet/