SOUND OF STONES

Generative Study:

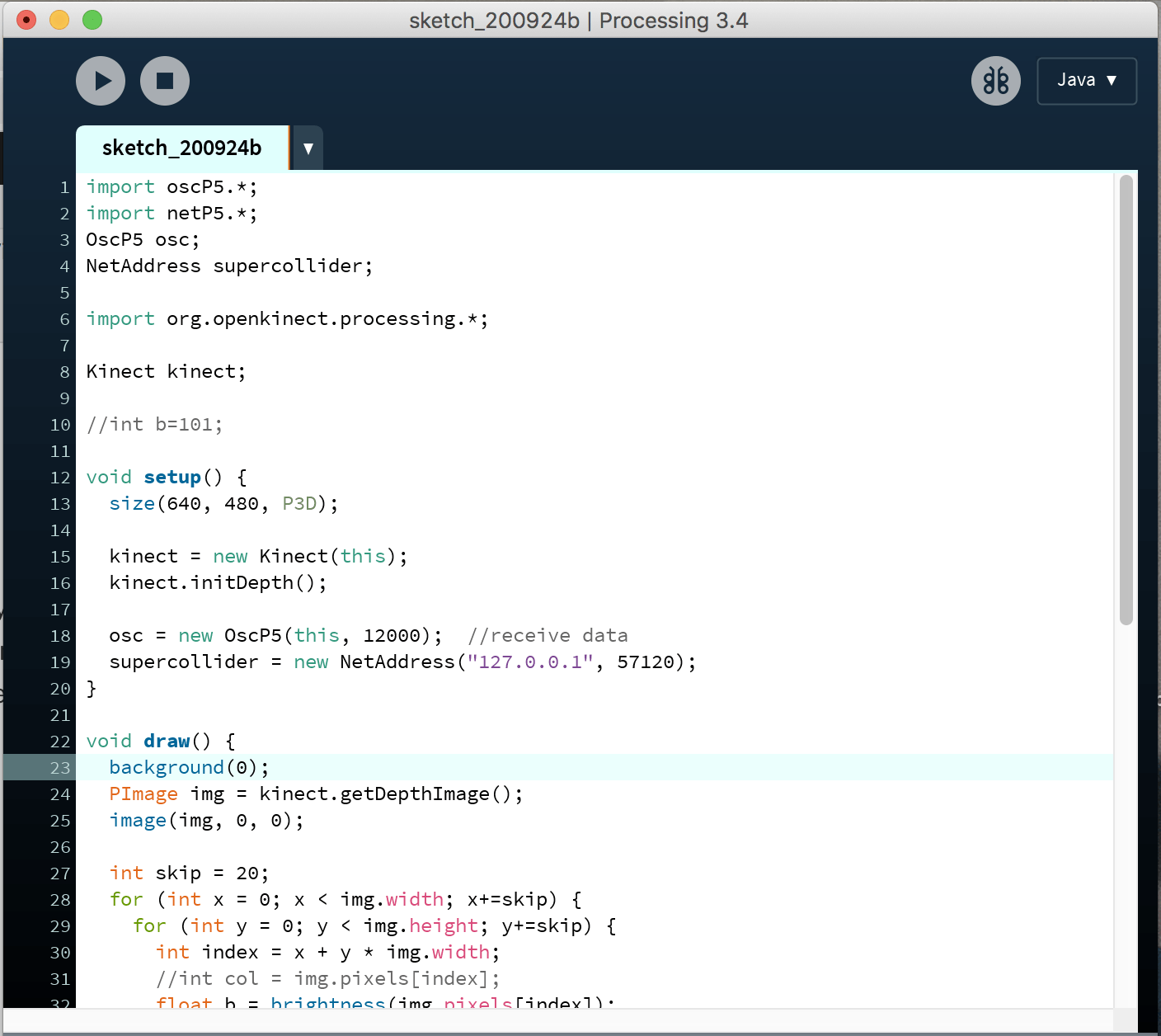

Real-time sound generation using depth data from the Kinect

Over Week 7, I experimented with SuperCollider, a platform for real-time audio synthesis and algorithmic composition that supports live coding, visualisation, and external connections to other software and hardware. On its real-time audio server, I wanted to experiment with unit generators (UGens) for sound analysis, synthesis and processing, so that I could study the components of sound both through the programming language and visually (Wavetable). The built-in visualisation tools (plot, frequency analyser, stethoscope, s.meter, etc.) would allow me to explore the visual components of sound.

Goals

- Connect the Kinect to Processing to obtain visual data (x, y, z/b)

- Create and experiment with virtual synthesizers (SynthDef function) in SuperCollider + visualisation

- Connect Processing and SuperCollider, send data from Kinect to generate sound (using Open Sound Control OSC)

Initially, I was going to use Python to feed data into SuperCollider, but Processing seemed more suitable for smaller sets of data.

SuperCollider

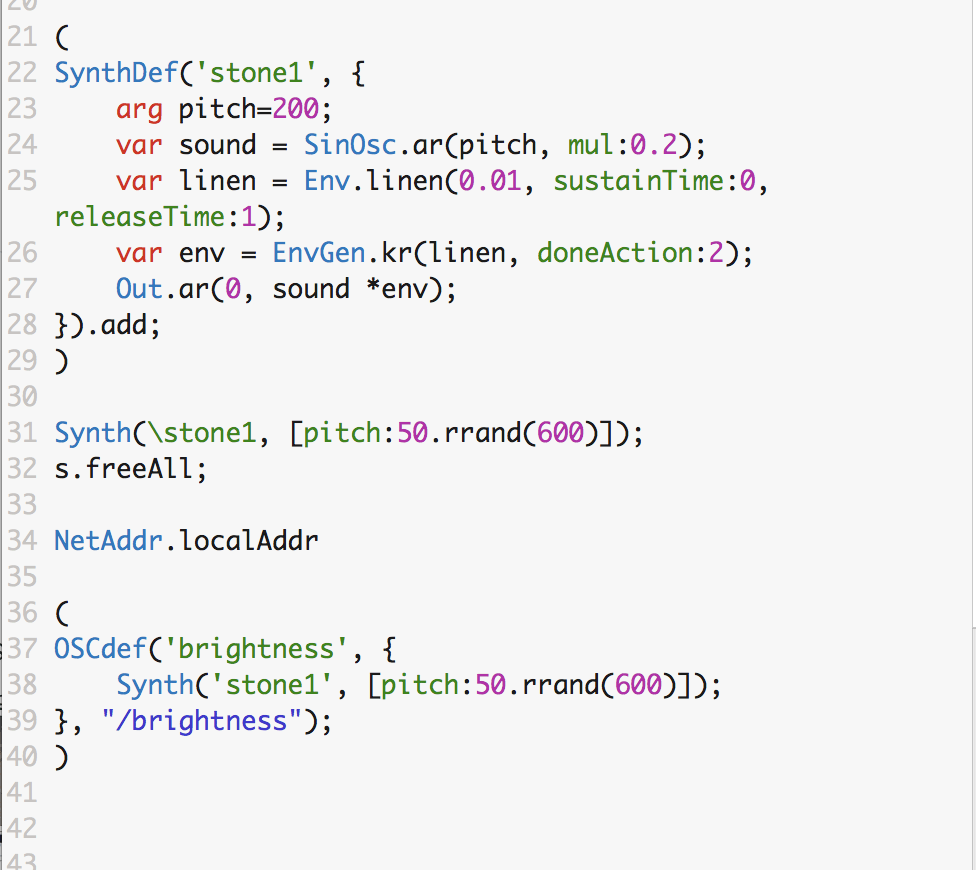

SynthDef is a class that lets us define a synth (the design of a sound). The sound itself has to be generated by running Synth.new() or calling .play, e.g. x = Synth.new(\pulseTest);. This allows us to design different types of sound, each in its own SynthDef(\name), and the server allows us to play and combine the different sounds live (live coding).

Interesting functions:

Within SuperCollider, there are interesting classes and UGens, beyond simply calling .play, that can be used to generate and control sound. These are relevant to my project, where I would like to generate the sounds, or the design of the sound, using external data from the Kinect. The ones I worked with include MouseX/MouseY (where the sound varies with the position of the mouse), Wavetable synthesis and Wave Shaper (where the input signals are shaped using wave functions), and Open Sound Control (OSCFunc, which allows SuperCollider to receive data from an external NetAddr).

MouseX

Multi-Wave Shaper (Wavetable Synthesis)

SuperCollider Tutorials (really amazing ones) by Eli Fieldsteel

Link: https://youtu.be/yRzsOOiJ_p4

https://funprogramming.org/138-Processing-talks-to-SuperCollider-via-OSC.html

Processing + SuperCollider

My previous experimentation involved obtaining depth data (x, y, z/b) from the Kinect and turning it into three-dimensional greyscale visuals in Processing. The depth value z is used to generate a brightness value, b, in the greyscale range 0 to 255 (black to white), which is drawn as one square for every 20 pixels (the skip value). To experiment with using real-time data to generate sound, I thought the brightness data, b, generated in the Processing sketch would make a good data point.
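To make the mapping concrete, here is a minimal Python sketch of the idea (the original sketch is written in Processing). It assumes an 11-bit raw depth range of 0 to 2047 and that nearer points are drawn brighter; both are assumptions for illustration, and the function names are hypothetical.

```python
# Sketch: sample a depth frame every `skip` pixels and map depth to brightness.
# Assumptions: raw depth spans 0..2047 and nearer points are brighter.

def depth_to_brightness(depth, d_min=0, d_max=2047):
    """Linearly map a raw depth value to a greyscale value in 0..255."""
    depth = max(d_min, min(d_max, depth))      # clamp to the expected range
    t = (depth - d_min) / (d_max - d_min)      # 0.0 (near) .. 1.0 (far)
    return round(255 * (1.0 - t))

def sample_grid(depth_frame, width, height, skip=20):
    """Return one (x, y, b) tuple for every skip-by-skip square."""
    points = []
    for y in range(0, height, skip):
        for x in range(0, width, skip):
            z = depth_frame[x + y * width]     # row-major 1D array of depths
            points.append((x, y, depth_to_brightness(z)))
    return points

# Usage with a fake 40x40 frame in which every pixel sits at mid depth.
frame = [1024] * (40 * 40)
print(sample_grid(frame, 40, 40))
```

With a skip value of 20, a 40x40 frame yields only four data points, which is why the brightness values stay a manageable size for sending to the sound engine.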

Connecting Processing to SuperCollider

Using the oscP5 library in Processing, we send data to a NetAddress, which delivers it to SuperCollider.

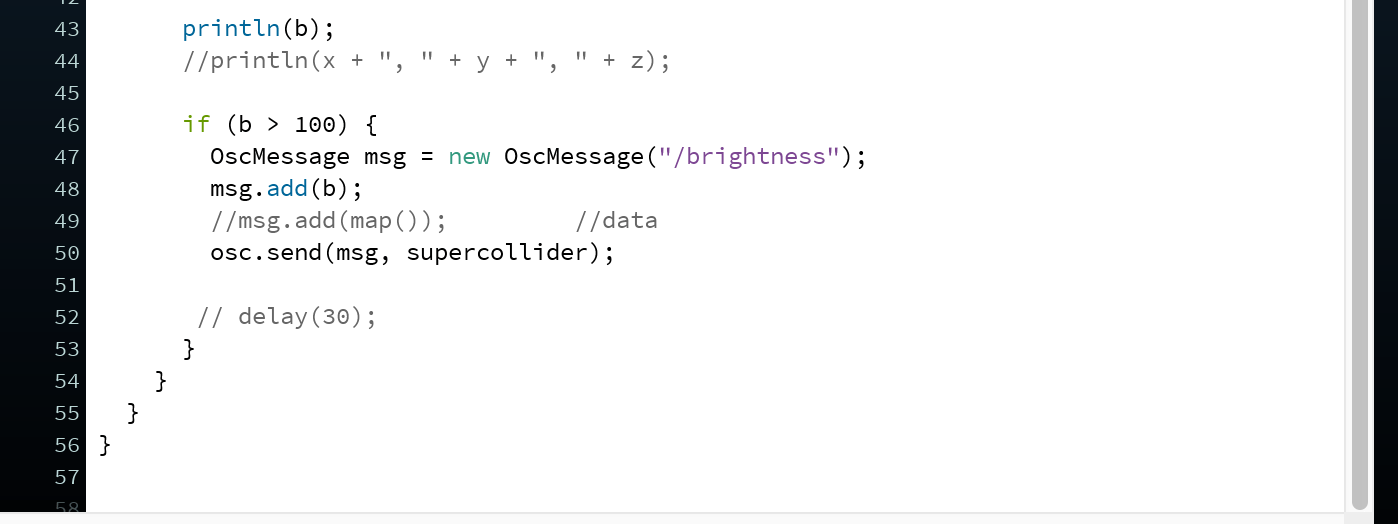

Using the brightness value, whenever b is greater than 100, an OSC message with the address ‘/brightness’ is sent to SuperCollider.
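For readers curious about what actually travels over the network, the following Python sketch builds the same kind of OSC message by hand using only the standard library. It is an illustration, not the Processing code from the screenshot: the port 57120 (the usual sclang default), the choice to attach b as an integer argument, and the function names are all assumptions.

```python
import socket
import struct

def osc_string(text):
    """Encode an OSC string: ASCII, null-terminated, padded to a multiple of 4 bytes."""
    data = text.encode("ascii") + b"\x00"
    return data + b"\x00" * (-len(data) % 4)

def osc_message(address, value):
    """Build an OSC message carrying a single 32-bit integer argument."""
    return osc_string(address) + osc_string(",i") + struct.pack(">i", value)

def send_if_bright(b, threshold=100, host="127.0.0.1", port=57120):
    """Send '/brightness' with the value b only when b exceeds the threshold."""
    if b <= threshold:
        return False
    packet = osc_message("/brightness", b)
    with socket.socket(socket.AF_INET, socket.SOCK_DGRAM) as sock:
        sock.sendto(packet, (host, port))      # OSC is usually carried over UDP
    return True

# Usage: inspect the raw bytes of one message without sending anything.
print(osc_message("/brightness", 120))
```

Because the message is sent only above the threshold, the receiver sees a stream of triggers rather than a continuous value, which matches the trigger-style behaviour described here.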

When the OSCdef() has been evaluated in SuperCollider and ‘-> OSCdef(brightness, /brightness, nil, nil, nil)’ appears in the Post window, it means that SuperCollider is open to receiving data from Processing. Once the Processing sketch is running, Synth(‘stone1’) is played whenever the message ‘/brightness’ is received.

Generative Sketch – Connecting depth data with sound

For the purpose of the generative sketch, I am using the data as a trigger for a sound that has been pre-determined in the SynthDef function. Different SynthDef functions can be coded for different sounds. So far, the interaction between the Kinect and the sound generation is time-based: movement generates the beats of a single sound. For a larger range of sounds specific to the captured visual data, and thus to textures, I would have to consider using the received data values within the design of each sound synthesizer.
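One way to move from "data as trigger" to "data inside the sound design" is to map each received value onto synth arguments before sending it. The Python sketch below shows such a mapping, similar in spirit to SuperCollider's linexp; the argument names (freq, amp) and the ranges are hypothetical choices for illustration, not values from the project.

```python
def linexp(x, in_lo, in_hi, out_lo, out_hi):
    """Map x from a linear input range to an exponential output range (clamped)."""
    x = max(in_lo, min(in_hi, x))
    t = (x - in_lo) / (in_hi - in_lo)
    return out_lo * (out_hi / out_lo) ** t

def brightness_to_params(b):
    """Turn one brightness value (0..255) into hypothetical synth arguments."""
    return {
        "freq": linexp(b, 0, 255, 200.0, 2000.0),  # Hz; exponential suits pitch perception
        "amp": b / 255 * 0.5,                      # linear, capped at 0.5 to leave headroom
    }

# Usage: a darker and a brighter square give different pitch and loudness.
print(brightness_to_params(60))
print(brightness_to_params(220))
```

An exponential curve is used for frequency because equal steps in brightness then correspond to roughly equal musical intervals rather than equal steps in Hz.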

Improvements

I see the generative sketch as a means to an end, so by no means does it serve as a final iteration. It was a good experiment for exploring the SuperCollider platform, which is new to me, and I was able to understand the workings of audio a little better. I would have to work more on the specifics of the sound design, playing with its components and making it more specific to the material.

Further direction and Application

Further experiments would use more data values (x, y, z/b) not only to trigger the sound (Synth();) but also within the design of the sound (SynthDef();). A possible development is to use the Wave Shaper function to generate sounds from a wavetable whose generating functions are manipulated or transformed using the real-time data from the Kinect.

Generative Sound and Soundscape Design

I would like to use the pure depth data of three-dimensional forms to map the individual soundscape (synthesizer) of each sound, so that the sound generated would be specific to the material. This relates to my concept of translating the materiality into a sound, where the textures of the stone correspond to a certain sound. So, if the stone is unmoved under the camera, an unchanging loop of a specific sound will be generated. When different stones are placed under the camera, the sounds would be layered to create a composition.

In terms of instrumentation and interaction, I can also use time-based data (motion, distance, etc) to change different aspects of sound (frequency, amplitude, rhythm, etc). The soundscape would then change when the stones are moved.
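As a rough illustration of time-based data, the Python sketch below measures motion as the average brightness change between two frames and smooths the result so that a sound parameter would glide rather than jump. Frame differencing is only one possible measure of motion, and the function names and smoothing factor are assumptions.

```python
def motion_amount(prev, curr):
    """Mean absolute brightness change between two frames, normalised to 0..1."""
    if len(prev) != len(curr) or not curr:
        raise ValueError("frames must be non-empty and the same length")
    total = sum(abs(a - b) for a, b in zip(prev, curr))
    return total / (255 * len(curr))

def smooth(previous, target, factor=0.2):
    """Move a fraction of the way towards the target on each frame."""
    return previous + factor * (target - previous)

# Usage: an unmoved stone gives 0.0; moving it changes some squares.
still = [100, 100, 100, 100]
moved = [100, 151, 100, 49]
print(motion_amount(still, still))
print(motion_amount(still, moved))
```

The motion value could then drive amplitude or rhythm, so that a stone left untouched keeps its steady loop while a moved stone changes the soundscape.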

Steps for Generative Study:

I have yet to establish a threshold on the Kinect to isolate smaller objects and get more data specific to the visual textures of materials. I might have to explore more 3D scanning programs that would allow me to extract information specific to three-dimensionality.
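A depth threshold of this kind could be sketched as a simple band filter: keep only the points whose depth falls between a near and a far limit, so the table surface and background drop out. The Python below is a minimal sketch; the limits and the example depth values are made up and would need tuning against the real setup.

```python
def isolate_band(depth_frame, near, far):
    """Keep depth values inside [near, far]; replace everything else with None."""
    return [z if near <= z <= far else None for z in depth_frame]

def coverage(masked):
    """Fraction of pixels that survived the threshold."""
    kept = sum(1 for z in masked if z is not None)
    return kept / len(masked)

# Usage: only the three values between 600 and 700 belong to the object.
frame = [300, 620, 650, 900, 640]
masked = isolate_band(frame, 600, 700)
print(masked)
print(coverage(masked))
```

The coverage value gives a quick check on whether the band is too narrow (almost nothing kept) or too wide (background still included).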

My next step would be to connect more data points from Processing to SuperCollider and try to create more specific arguments in SynthDef(). After that, I would connect my Pointcloud sketch to SuperCollider, where I might be able to create more detailed sound generation specific to 3D space.

Link to Performance and Interaction:

Proposal for Performance and Interaction class:

https://drive.google.com/file/d/1U5J0XajPlCrGfuhPQEI6J1zQDqRu2tJL/view?usp=sharing

Audio Set up for Mac (SuperCollider):

Audio MIDI Setup

Class notes recap and additional thoughts

You can have a camera take a snapshot of a stone, then convert just the colors of its surface into different properties for sound generation. If you want to convert a visual 2D scan into a 3D “landscape” and then into sound, you can line-scan the image captured by a video/photo camera, convert each scanned line through its RGB data into a 2D curve, feed these 2D curves sequentially to build a 3D wavetable (with or without interpolation between each curve, depending on the scanning resolution), and finally play the wavetable.
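The line-scan idea above can be sketched in a few lines of Python. This is a simplified illustration under stated assumptions: each row's RGB values are averaged into a single brightness, scaled to the -1 to 1 range used for audio samples, and neighbouring rows are optionally blended linearly. The function names are hypothetical, and a playable wavetable would probably need further steps (for example resampling each row to a fixed length).

```python
def row_to_curve(row):
    """Convert one scan line of (r, g, b) pixels into samples in -1.0..1.0."""
    lum = [(r + g + b) / 3 for r, g, b in row]   # simple average as brightness
    return [v / 127.5 - 1.0 for v in lum]

def interpolate(curve_a, curve_b, steps):
    """Create `steps` linearly blended frames between two curves."""
    frames = []
    for i in range(1, steps + 1):
        t = i / (steps + 1)
        frames.append([a + t * (b - a) for a, b in zip(curve_a, curve_b)])
    return frames

def build_wavetable(image, steps=0):
    """Stack scan-line curves into a list of frames (the '3D' wavetable)."""
    curves = [row_to_curve(row) for row in image]
    table = []
    for i, curve in enumerate(curves):
        table.append(curve)
        if steps and i + 1 < len(curves):
            table.extend(interpolate(curve, curves[i + 1], steps))
    return table

# Usage: a tiny 2x2 "image" with one interpolated frame between its rows.
image = [[(0, 0, 0), (255, 255, 255)],
         [(255, 255, 255), (0, 0, 0)]]
print(build_wavetable(image, steps=1))
```

Setting steps to 0 corresponds to the "without interpolation" case, which may be enough when the scanning resolution is already high.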

Another important point is how to avoid simply making noise and instead generate a pleasing sonic output from scanning. There are probably many techniques for this “harmonization” or “musification” of generated sound. I believe there is a way to achieve this by manipulating audio samples instead of using sound synthesis.

Regarding these two points, I have no formal music education, so I am wildly guessing here and there are probably much more elegant solutions. You will need to do your own research to find and implement them within your system.

You can also consult with Professor Ross; if you want to do that, please let me know so I can ask him to help you out. You can additionally consult with Philippe about this at his guest talk.

Two quick links on wavetables, the first one for Ableton Live 10, second one for Arturia Pigments:

https://youtu.be/c5Sn0Kibu2w

https://youtu.be/_w1h4vknfow