Dorin, Alan, Jonathan McCabe, Jon McCormack, Gordon Monro, and Mitchell Whitelaw. “A framework for understanding generative art.” Digital Creativity 23, no. 3-4 (2012): 239-259.

Aim of the Paper

When analysing and categorising generative artworks, the critical structures of traditional art do not seem to be applicable to “process based works”. The authors of the paper therefore devised a new framework to deconstruct and classify generative systems by their components and characteristics. By breaking generative processes down into defining components (‘entities (initialisation, termination)’, ‘processes’, ‘environmental interaction’ and ‘sensory outcomes’), we are able to critically characterise and compare generative artworks whose underlying generative processes, rather than their outcomes, share points of similarity.

The paper first looked at previous approaches to classifying generative art. By highlighting the ‘process taxonomies’ of different disciplines that adopt the “perspective of processes”, the authors used a ‘reductive approach’ to direct their own framework towards the field of generative art in particular. Generative perspectives and paradigms have emerged in seemingly unrelated disciplines, such as biology, kinetic and time-based arts, and computer science, which adopt algorithmic processes or parametric strategies to generate actions or outcomes. Previous studies explored specific criteria of emerging generative systems by “employing a hierarchy, … simultaneously facilitate high-level and low-level descriptions, thereby allowing for recognition of abstract similarities and differentiation between a variety of specific patterns” (p. 6, para. 4). In developing the critical framework for generative art, the authors took into consideration the “natural ontology” of the work, selecting a level of description appropriate to its nature. Adopting “natural language descriptions and definitions”, the framework aims to serve as a way to systematically organise and describe a range of creative works based on their generativity.

Characteristics of ‘Generative Art System’

Generative art systems can be broken down into four (seemingly) chronological components: Entities, Processes, Environmental Interaction and Sensory Outcomes. As generative artworks are not characterised by the medium of their outcomes, the structures of comparison lie in the approach to and construction of the system.

All generative systems contain independent actors, ‘Entities’, whose behaviour depends mostly on the mechanisms of change, ‘Processes’, designed by the artist. The behaviour of the entities, whether digital or physical, may be autonomous to an extent decided by the artist and determined by the entities’ own properties. For example, Sandbox (2009) by artist couple Erwin Driessens and Maria Verstappen is a diorama in which a terrain of sand is continuously manipulated by a software system that controls the wind. The paper highlights how each grain of sand can be considered a primary entity in this generative system, and how the behaviour of the system as a whole depends on the physical properties of the material itself. The choice of entity therefore affects the system: in this work, the properties of sand (position, velocity, mass and friction) shape how the system behaves. I think the nature of the chosen entities is an important factor, especially for generative artworks that use physical materials.

The entities and the algorithms of change that act upon them also exist within a “wider environment from which they may draw information or input upon which to act” (p. 10, para. 3). The information flow between the generative processes and their operating environment can be classified as ‘Environmental Interaction’, where incoming information from external factors (human interaction or artist manipulation) can set or change parameters during execution, leading to different sets of outcomes. These interactions can be characterised by “their frequency (how often they occur), range (the range of possible interactions or amount of information conveyed) and significance (the impact of the information acquired from the interaction of the generative system)”. The framework also classifies interactions as “higher-order” when they involve the artist or designer in the work, who can manipulate the results of the system through the intermediate generative process, or by adjusting the parameters or the system itself in real time, “based on ongoing observation and evaluation of its outputs”. Higher-order interactions are made in response to feedback from the generated results, which resembles machine learning techniques or self-informing systems. This process results in changes to the system’s entities, interactions and outcomes and can be characterised as “filtering”. Higher-order interaction is prevalent in live coding, for example with audio generation software that supports live coding such as SuperCollider and Sonic Pi, where tweaking the performance or outcome is the main creative input.

The last component of generative art systems is the ‘Sensory Outcomes’, which can be evaluated based on “their relationship to perception, process and entities.” The generated outcomes can be perceived sensorially or interpreted cognitively, as they are produced in different static or time-based forms (visual, sonic, musical, literary, sculptural etc). When the outcomes seem unclassifiable, they can be made sense of through a process of mapping, where the artist decides how the entities and processes of the system are transformed into “perceptible outcomes”. “A natural mapping is one where the structure of entities, process and outcome are closely aligned.”
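One way to read the four components is as the fields of a simple record, which makes it possible to compare two works by process rather than by outcome. The sketch below is my own illustration and not something the paper provides: the `GenerativeSystem` class, its field names and the short labels used for Sandbox and Tree Drawings are all assumptions paraphrased from this summary.

```python
from dataclasses import dataclass, field
from typing import Dict, List, Set


@dataclass
class GenerativeSystem:
    """Hypothetical record holding the framework's four components."""
    title: str
    entities: List[str]
    processes: List[str]
    environmental_interaction: List[str] = field(default_factory=list)
    sensory_outcomes: List[str] = field(default_factory=list)

    def shared_components(self, other: "GenerativeSystem") -> Dict[str, Set[str]]:
        """For each component, return the labels that both systems share."""
        names = ("entities", "processes",
                 "environmental_interaction", "sensory_outcomes")
        return {n: set(getattr(self, n)) & set(getattr(other, n)) for n in names}


# Labels below are illustrative paraphrases, not terms taken from the paper.
sandbox = GenerativeSystem(
    title="Sandbox",
    entities=["grain of sand"],
    processes=["wind"],
    sensory_outcomes=["changing sand terrain"],
)
tree_drawings = GenerativeSystem(
    title="Tree Drawings",
    entities=["tree branch"],
    processes=["wind"],
    sensory_outcomes=["drawing on canvas"],
)

shared = sandbox.shared_components(tree_drawings)
print(shared["processes"])
```

Here the two works differ in entities and outcomes but overlap in process, which is the kind of similarity the framework is meant to surface.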

Case studies of generative artworks

The Great Learning, Paragraph 7 – Cornelius Cardew (1971)

Paragraph 7 is a self-organising choral work performed using a written “score” of instructions. The “agent-based, distributed model of self-organisation” produces musically varying outcomes within the same recognisable system. Although the work depends on human entities and leaves room for interpretation or error in the instructions, similarities with Reynolds’ flocking system can be observed.

Tree Drawings – Tim Knowles (2005)

Using natural phenomena and materials from ‘nature’, the work relies on the movement of wind-blown branches to create drawings on canvas. Using the found process of natural wind to move the entities highlights the effect that the physical properties of the chosen materials and of the environment have on the outcome. “The resilience of the timber, the weight and other physical properties of the branch have significant effect on the drawings produced. Different species of tree produce visually discernible drawings.”

The work includes an element of surprise because the system is highly autonomous: the artist’s involvement is limited to the choice of location and trees and the duration. This brings to mind the concept of “agency” in art. Is agency still relevant in producing outcomes in generative art systems? Or does the role of the artist shift when it comes to generative art?

The Framework on my Generative Artwork ‘SOUNDS OF STONE’

Visual system:

Work Details

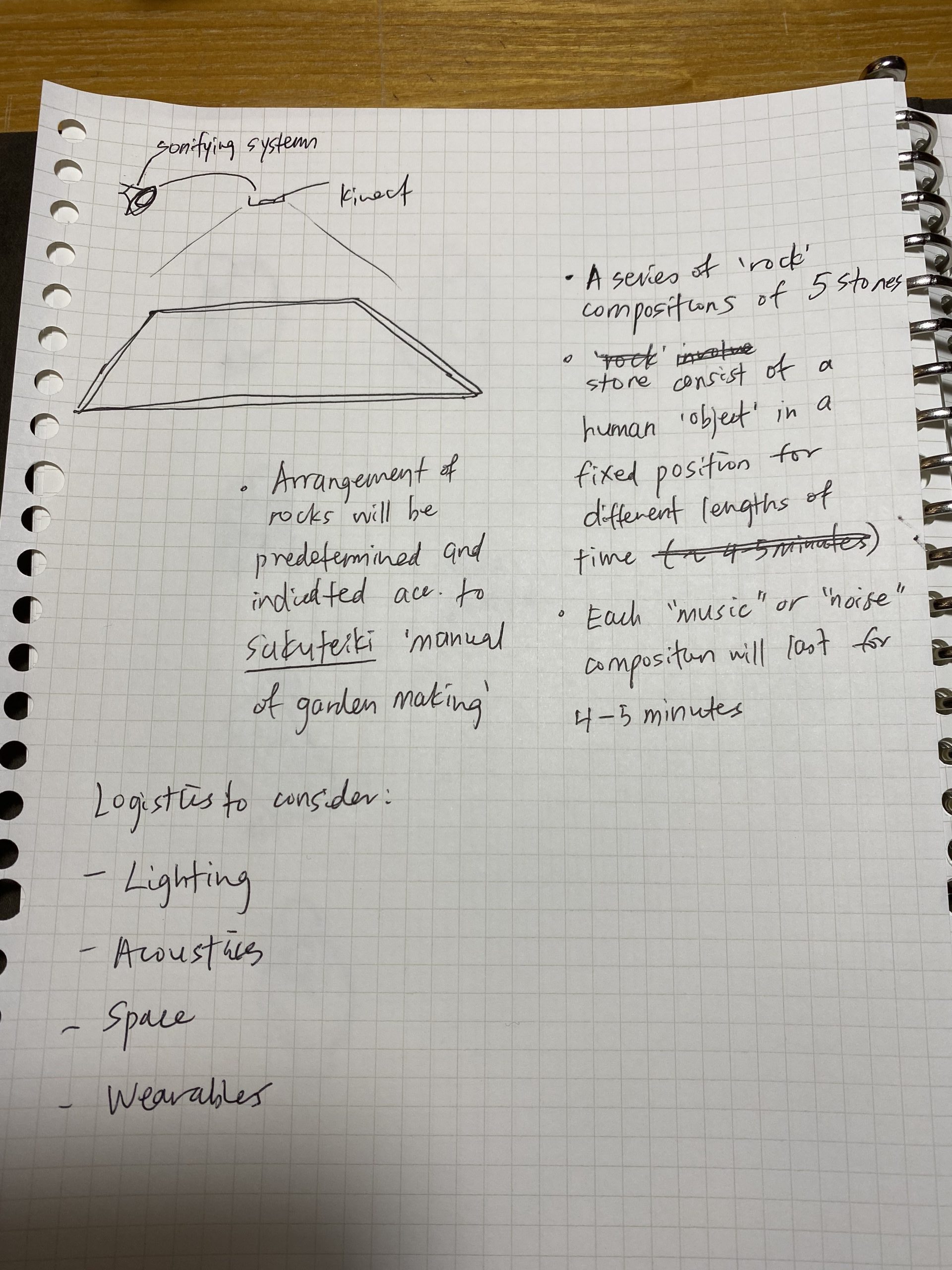

‘Sounds of Stone’ (2020) – Generative visual and audio system

Entities

Visual: Stones, Points

Audio: Stones, Data-points, Virtual synthesizers

Initialisation / Termination:

Initialisation and termination determined by human interaction (by placing and removing a stone within the boundary of the system)

Processes

Visual and sound states change through placement and movement of stones

Each ‘stone’ entity performs a sound, where each sound corresponds to its visual texture (Artist-defined process)

Combination of outcomes depends on the number of entities in the system

“Live”: the artist, performer or audience can manipulate the outcome after listening to or observing the generated sound and visuals.

Environmental Interaction

Room acoustics

Human interaction, behaviours of the participants

Lighting

Sensory Outcomes

Real-time/ live generation of sound and visuals

Audience-defined mapping
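The entity lifecycle and process listed above can be sketched in a few lines. This is an illustrative model only, not the actual implementation of the work: the `StoneSystem` class, the texture names and the `TEXTURE_TO_SOUND` table are hypothetical placeholders for the artist-defined mapping from visual texture to sound.

```python
from typing import Dict, List

# Hypothetical artist-defined mapping from a stone's visual texture to a sound.
TEXTURE_TO_SOUND: Dict[str, str] = {
    "smooth": "sine pad",
    "grainy": "noise burst",
    "cracked": "percussive click",
}


class StoneSystem:
    """Tracks the stones currently inside the boundary of the system."""

    def __init__(self) -> None:
        self.stones: Dict[str, str] = {}  # stone id -> texture

    def place(self, stone_id: str, texture: str) -> None:
        """Initialisation: a stone enters the boundary and becomes an entity."""
        if texture not in TEXTURE_TO_SOUND:
            raise ValueError(f"no sound defined for texture {texture!r}")
        self.stones[stone_id] = texture

    def remove(self, stone_id: str) -> None:
        """Termination: a stone leaves the boundary and its sound stops."""
        self.stones.pop(stone_id, None)

    def active_sounds(self) -> List[str]:
        """The combined outcome depends on which stones are present."""
        return sorted(TEXTURE_TO_SOUND[t] for t in self.stones.values())


system = StoneSystem()
system.place("a", "smooth")
system.place("b", "cracked")
print(system.active_sounds())  # both stones sound together
system.remove("a")
print(system.active_sounds())  # only the remaining stone sounds
```

Keeping the mapping in a single table separates the artist-defined process from the human interaction that initialises and terminates entities.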

As the work is still in progress, I cannot evaluate its sensory outcomes at this point. Based on the classic features of generative systems used to evaluate Paragraph 7 (a performative instructional piece), such as “emergent phenomena, self-organisation, attractor states and stochastic variation in their performances”, I predict that the sound compositions of ‘Sounds of Stone’ will go from being self-organised to chaotic as the participants spend more time within the system. As the work exists as a generative tool or instrumental system, I also predict that the audience will grow familiar with the audio generation over time as they interact with it. With ‘higher-order’ interactions, the audience will intuitively be able to generate ‘musical’ outcomes, converting noise into perceptible rhythms and combinations of sounds.
