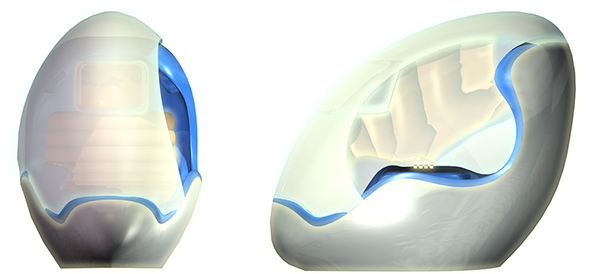

Google Glass is an optical head-mounted display in the shape of a pair of eyeglasses, developed by Google X (since renamed X).

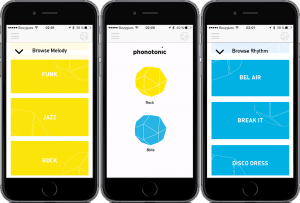

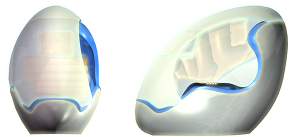

Google Glass, when worn, displays information and lets the user perform various simple functions, e.g. snapping a photo or sending messages and images, by issuing a voice command or using the capacitive touchpad along the right side of the glasses. This information, in the form of images or text, is overlaid onto a glass prism at the front of the glasses without obscuring the wearer’s vision.

Other things Google Glass can do:

- Remind the wearer of appointments and calendar events.

- Alert the wearer to social networking activity or text messages.

- Give turn-by-turn directions.

- Alert the wearer to travel options like public transportation.

- Give updates on local weather and traffic.

- Take and share photos and video.

- Send messages or activate apps via voice command.

- Perform Google searches.

- Participate in video chats on Google Plus.

This information is projected slightly above the wearer’s line of sight.

Its metaphor, “looking into the future”, suits the design of the product well. Google Glass takes what would otherwise be an external device, e.g. a phone or computer, and folds it into a more convenient, consolidated one. However, I felt that its functions were only rudimentary and do not warrant the $1,500 price tag.

[Cons] The price tag deters the common user, which is ironic because the product was made for the common man, as a replacement for an everyday item such as the handphone. There is a mismatch between what the product does and what it costs. Three years after its debut, Google Glass was deemed a failure in the mainstream market.

One point to note is that feedback from Google Glass is limited to the wearer. For example, when Google Glass is recording a live video, the people around the wearer will not realise it; only the wearer can see this on the inner, projected screen. Non-users may feel intruded upon. Perhaps this was deliberate on Google X’s part: removing outward feedback helps the product feel less like a foreign object. It does not bode well for non-users, however, who may feel disengaged from the wearer. Another criticism of Google Glass concerned its design: it looked too futuristic to pass as an everyday item, which ironically is exactly what it was intended to be.

Another design ‘fault’ that I disliked is that the projected screen sits on a single lens only. I felt that if I were to use it, I would have to squint to focus on the screen, which is neither ideal nor intuitive.

[Pros] Despite this, the product seems very intuitive in its navigation, in how it is worn, and in what it delivers. Simple swipes (up, down, forward, back) navigate the interface, making it easy to learn and manipulate. It is worn over the eyes like a pair of spectacles and does not obstruct actual vision, a plus point. In addition, the functions it offers, such as displaying a map or replying to messages hands-free, create a more ‘human’ experience without the need for, or the feeling of, another foreign device.

Nevertheless, Google Glass has a great deal of potential and can be used in more specialised fields, for instance telemedicine, teaching, conference calls or reporting. As an Augmented Reality device, it can be further adapted to suit our current needs.