Winzaw and I looked at some tutorials on YouTube and tried to mash a few of them together.

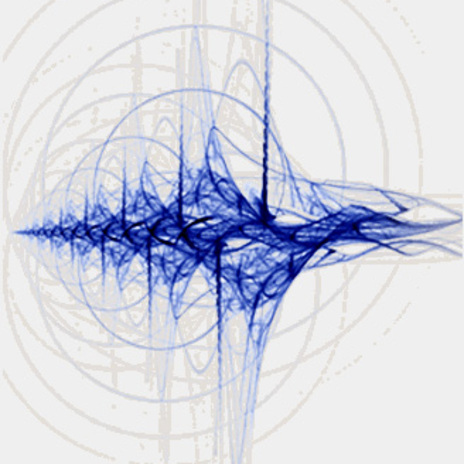

The patch records a voice for 8 seconds, then plays it back twice in a robotic tone. The playback audio drives the particles to generate some visual feedback, after which the process repeats automatically. We had to create a randomizer for the voice playback so that it alters the voice dynamically.
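
For anyone who wants to follow the logic outside the patch, here is a rough Python sketch of the same loop. It is not our actual patch, just an approximation under some assumptions: the microphone is replaced by a generated tone (`fake_recording`), the randomizer is assumed to pick a playback speed between 0.5x and 2x, and the particle mapping (loudness times 500) is made up for illustration.

```python
import math
import random

SAMPLE_RATE = 8000      # assumed; kept low so the sketch runs quickly
RECORD_SECONDS = 8      # from the post: the patch records for 8 seconds
REPEATS = 2             # from the post: the recording is played back twice


def fake_recording(seconds=RECORD_SECONDS, rate=SAMPLE_RATE):
    """Hypothetical stand-in for the microphone: a 220 Hz tone that swells."""
    n = seconds * rate
    return [math.sin(2 * math.pi * 220 * i / rate) * (i / n) for i in range(n)]


def resample(buffer, speed):
    """Play the buffer back at a different speed (nearest-sample lookup)."""
    length = int(len(buffer) / speed)
    return [buffer[min(int(i * speed), len(buffer) - 1)] for i in range(length)]


def envelope(buffer, window=800):
    """Average absolute amplitude per window; one value per visual frame."""
    levels = []
    for start in range(0, len(buffer), window):
        chunk = buffer[start:start + window]
        levels.append(sum(abs(s) for s in chunk) / len(chunk))
    return levels


def one_cycle(rng):
    """Record once, then play back twice with a freshly randomized speed."""
    recording = fake_recording()
    frames = []
    for _ in range(REPEATS):
        speed = rng.uniform(0.5, 2.0)  # assumed range for the randomizer
        played = resample(recording, speed)
        # Hypothetical mapping: louder audio emits more particles per frame.
        frames.extend(int(level * 500) for level in envelope(played))
    return frames
```

Calling `one_cycle(random.Random())` returns a list of particle counts, one per frame, for a single record-and-playback cycle. Running it in a loop mimics the patch restarting automatically.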

This was to see what we can do with particles and audio feedback, and how to combine them. We will see how this can be applied to our ideas for the project.

To see the rest of our progress: