Our team consists of Winzaw and Fabian.

Here is our progress in chronological order:

21st Mar, 28th Mar, 31st Mar, 4th Apr, 11th Apr, 14th Apr

Main Idea:

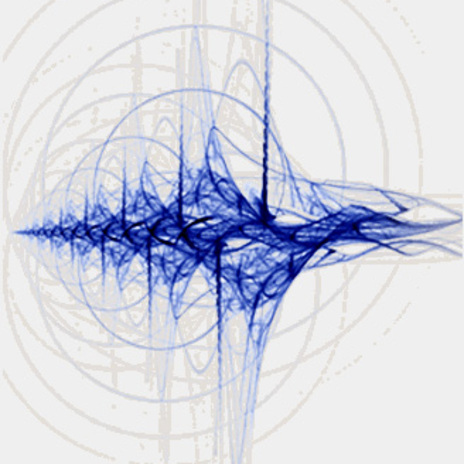

Our project will be split into two separate endeavors, which will eventually be combined into a seamless interactive experience. We are interested in distorting sound and images so that they respond to each other in a cohesive manner. This video is an example to illustrate the idea.

Aims:

- Sound. We want the patch to constantly record and play back the things people say to it. This will probably be the basis for interaction: no buttons or sliders, just saying things to the patch.

- Visual. Based on the pitch and frequency of the sounds being played back, we will get Jitter to generate visuals, either in the form of particle systems or in the form of real-time distortion of the images captured via webcam.
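The mapping from sound to visuals described in these aims can be sketched outside of Max as follows. This is only an illustration in Python, not the patch itself: the function names, the 80 to 1000 Hz range, and the crude zero-crossing estimate are all assumptions made for the example, and the actual patch would more likely rely on a dedicated pitch tracker.

```python
import math


def estimate_frequency(samples, sample_rate):
    """Crude frequency estimate: count upward zero crossings per second.

    Hypothetical helper for illustration; not a robust pitch detector.
    """
    crossings = 0
    for prev, cur in zip(samples, samples[1:]):
        if prev < 0 <= cur:
            crossings += 1
    duration = len(samples) / sample_rate
    return crossings / duration if duration > 0 else 0.0


def frequency_to_distortion(freq_hz, low_hz=80.0, high_hz=1000.0):
    """Map a frequency to a distortion amount in [0, 1] on a log scale.

    The 80-1000 Hz range is an assumed rough range for speech and is
    not taken from the project description.
    """
    if freq_hz <= low_hz:
        return 0.0
    if freq_hz >= high_hz:
        return 1.0
    return math.log(freq_hz / low_hz) / math.log(high_hz / low_hz)


def sine(freq_hz, sample_rate=8000, seconds=1.0):
    """Generate a test tone standing in for recorded speech."""
    n = int(sample_rate * seconds)
    return [math.sin(2 * math.pi * freq_hz * i / sample_rate) for i in range(n)]


if __name__ == "__main__":
    tone = sine(220.0)
    freq = estimate_frequency(tone, 8000)
    amount = frequency_to_distortion(freq)
    print(f"estimated {freq:.0f} Hz, distortion amount {amount:.2f}")
```

The log scale is used because pitch is perceived roughly logarithmically, so equal musical intervals produce equal changes in the visual parameter. The resulting value could drive either the particle system or the webcam distortion.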

Timeline:

- 28th Mar. Working patch for sound recorder and playback (with/without distortion)

- 4th Apr. Working patch for visuals, either (i) particle systems that respond to the recorded sound or (ii) distortion of the image grabbed from the webcam (if that is possible)

- 11th Apr. Connected patch combining the two endeavors, plus fine-tuning of the timing and sequencing for interaction