Just a heads up: I’m stuck.

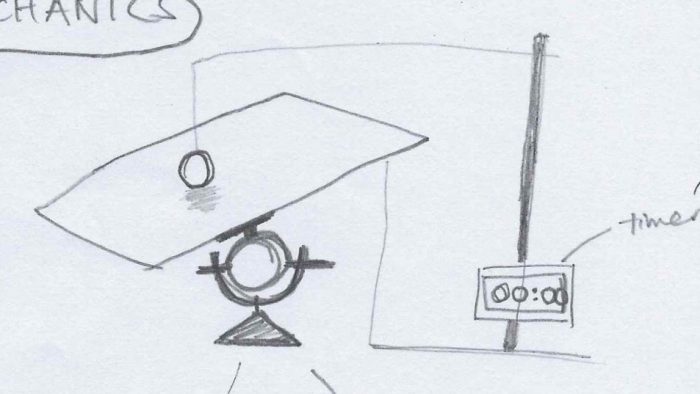

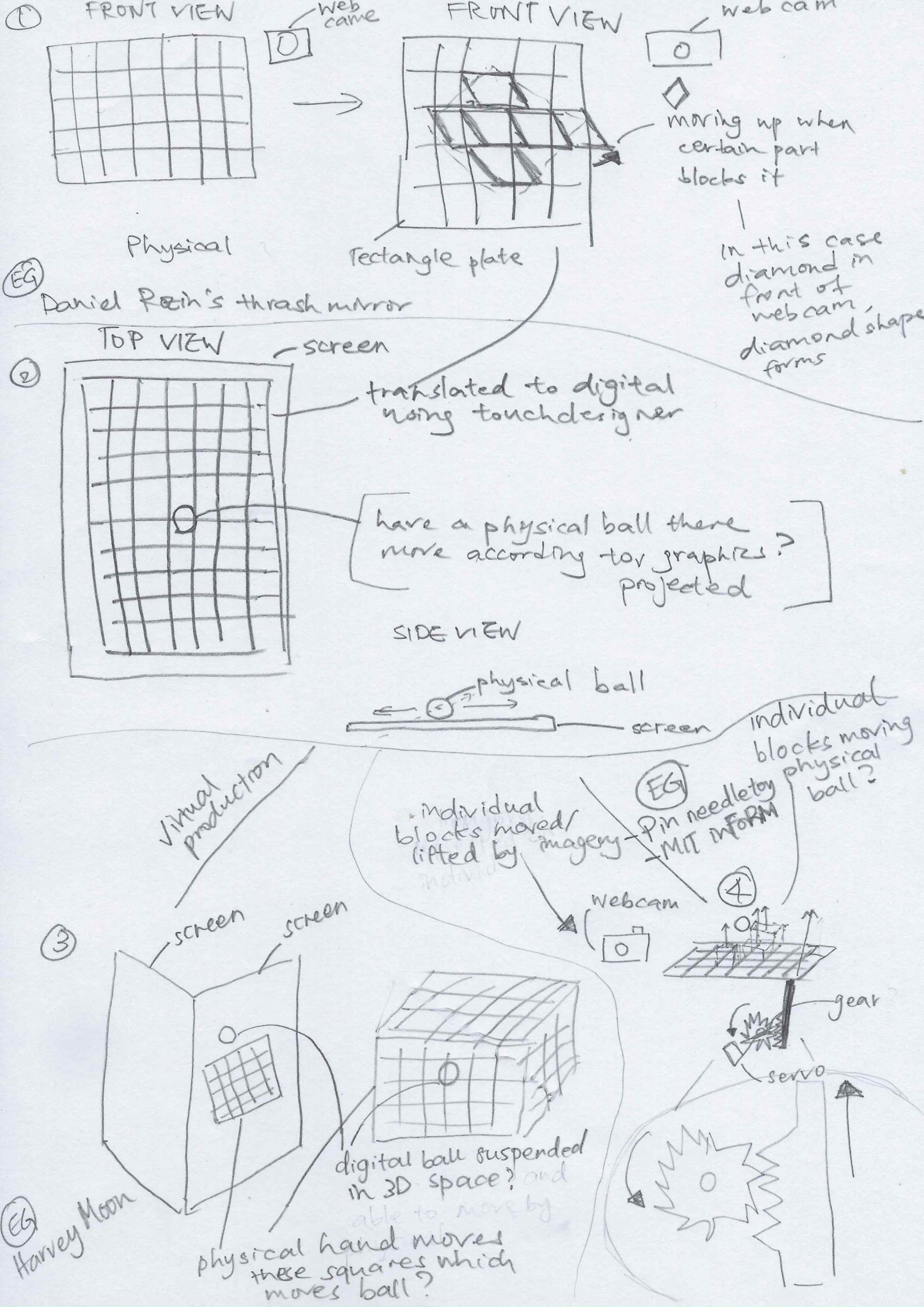

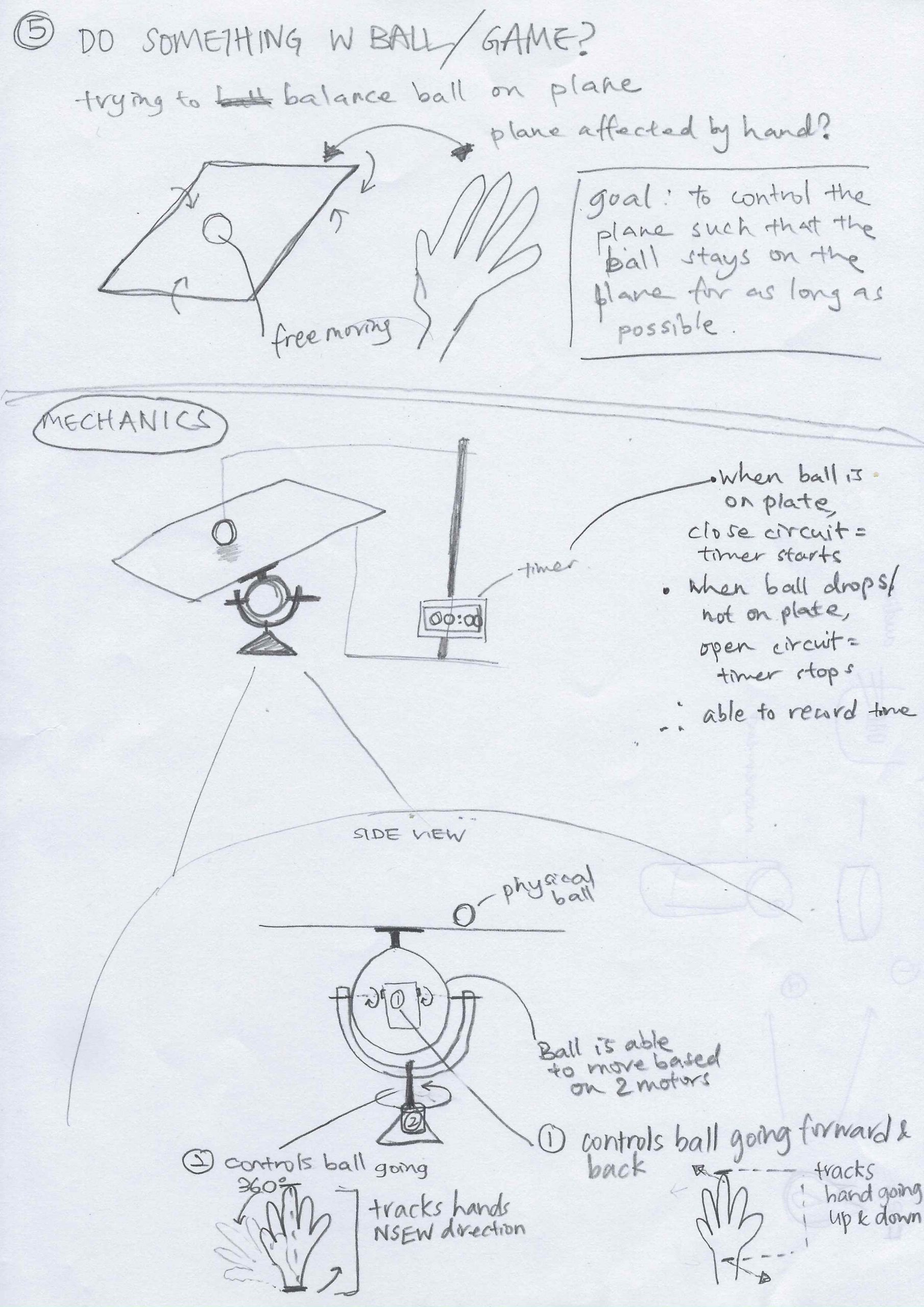

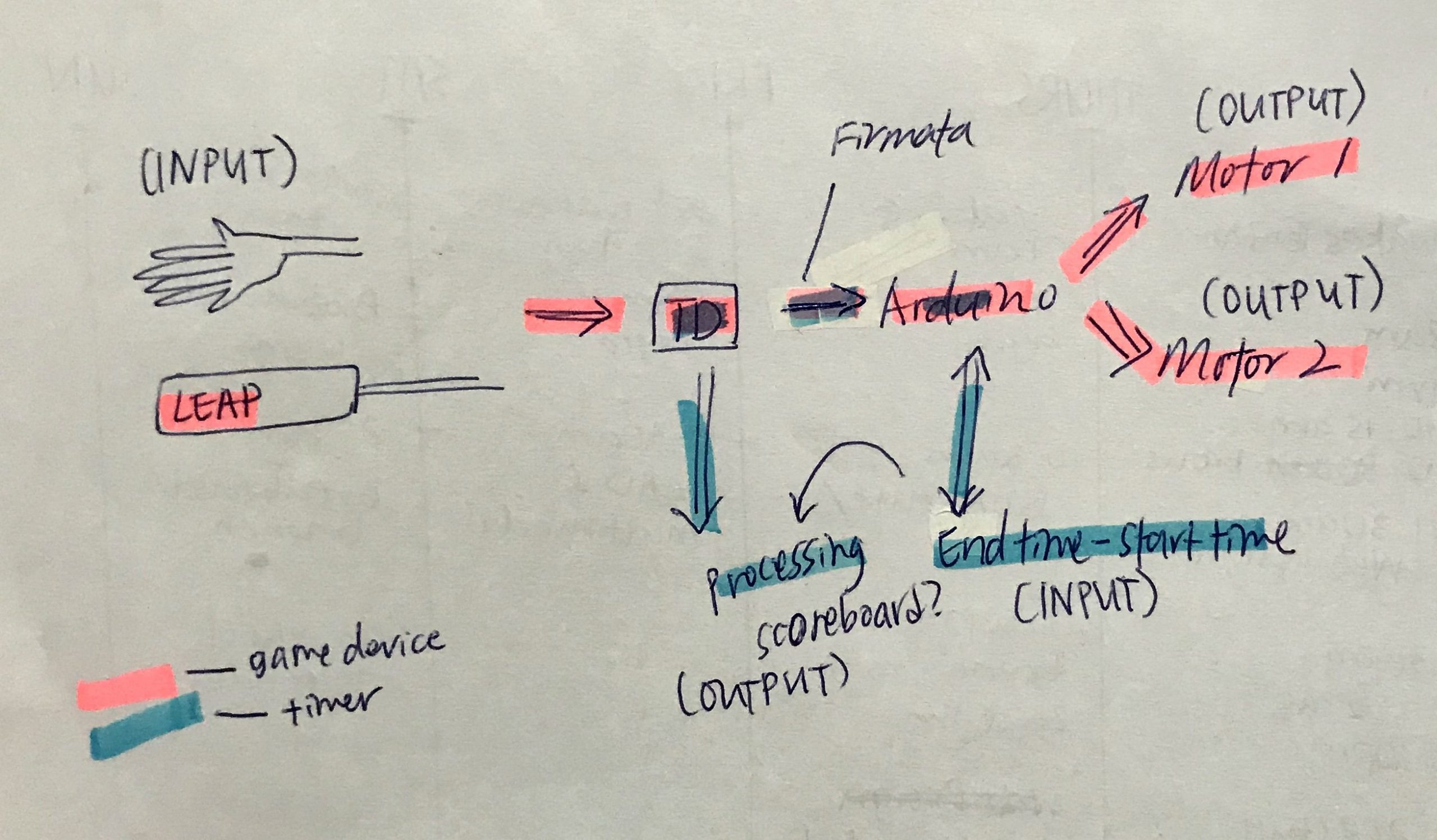

Right now, the goal is to have a camera detect whether the orange ball is still on the plane: if yes, the timer runs; if not, the timer stops.

I have tried a few methods to achieve this, but none of them worked.
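The timer half of that goal is independent of which camera ends up working, so it can be sketched on its own. Below is a minimal sketch in Python, assuming some detector hands over a yes/no result per frame; the class name `PresenceTimer` and its `update` method are made up for illustration and are not from any library.

```python
import time


class PresenceTimer:
    """Accumulates time only while the ball is reported as present."""

    def __init__(self):
        self.elapsed = 0.0      # total seconds the ball has been on the plane
        self._last_seen = None  # timestamp of the previous "present" update

    def update(self, ball_present, now=None):
        """Feed one detection result; returns the total elapsed seconds."""
        if now is None:
            now = time.monotonic()  # monotonic clock, unaffected by system clock changes
        if ball_present:
            if self._last_seen is not None:
                # Ball was present on the previous update too: count the gap.
                self.elapsed += now - self._last_seen
            self._last_seen = now
        else:
            # Ball is gone: pause, so the next "present" restarts the clock.
            self._last_seen = None
        return self.elapsed
```

Calling `update(...)` once per camera frame would be enough; the `now` argument is only there so the logic can be tried out without waiting in real time.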

- Processing + Macbook Facetime HD Camera

Used the ‘Video’ library of Processing

Problem as per screenshot:

Unfortunately, I am on macOS Catalina version 10.15.5: https://github.com/processing/processing-video/issues/134

I tried the solution suggested by ihaveaccount in the thread above, but the string doesn’t return as they said it would. Without the Video library, this also rules out external webcams (I tried using my DSLR as a webcam).

- Processing + IP Camera

Since I was hoping for the webcam to be portable anyway, I tried to use an IP Camera.

Problem: in my Processing sketch, nothing shows up: no error, no video feed, nothing. The IP camera doesn’t show that anything is connected to it either.

Not sure if it’s because I’m running Processing on a MacBook while using an Android IP camera app?

- TouchDesigner + IP Camera

Used the Video Stream In with the RTSP network protocol. While yes, there is a video feed, it is SOOO choppy and pretty much only refreshes when I “pulse” the node.

- TouchDesigner + Web Camera

https://forum.derivative.ca/t/video-device-in-webcam-doesnt-work/12208/13

Once again, it seems that for everyone on a macOS version after 10.14, the webcam doesn’t work.

I did try the latest beta version of TouchDesigner. However, it only works when I first start a timeline; once I save the file, close it, and reopen it, it’s just a black screen again.

So now I’m stuck. I’m thinking maybe a Raspberry Pi (since it runs Linux rather than macOS) with a webcam or a camera module, and maybe something along the lines of Python with the OpenCV library; it would be great if I could run Processing on the Raspberry Pi. But this is a whole new set of problems, because first, I don’t have a Raspberry Pi, and just learning to work with it is like 😵🤯😩.
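For the Python route, the detection itself could be a simple colour threshold. With OpenCV this would usually be done with `cv2.cvtColor` and `cv2.inRange`; the sketch below shows the same idea in plain Python so it can be tried without a camera or a Raspberry Pi. The hue/saturation/brightness limits, the `min_pixels` value, and the function names are all assumptions that would need tuning against the real ball and lighting.

```python
import colorsys


def is_orange(r, g, b):
    """True if an 8-bit RGB pixel falls in a rough 'orange' HSV range."""
    h, s, v = colorsys.rgb_to_hsv(r / 255, g / 255, b / 255)
    # Assumed thresholds: hue about 15-45 degrees, reasonably saturated and bright.
    return 15 / 360 <= h <= 45 / 360 and s >= 0.5 and v >= 0.5


def ball_present(frame, min_pixels=4):
    """frame is a list of rows, each row a list of (r, g, b) tuples.

    Returns True when at least min_pixels pixels look orange.
    """
    count = sum(1 for row in frame for (r, g, b) in row if is_orange(r, g, b))
    return count >= min_pixels


# Tiny fake 3x3 "frame": a 2x2 orange patch on a grey background.
orange, grey = (255, 140, 0), (128, 128, 128)
frame = [
    [grey, orange, orange],
    [grey, orange, orange],
    [grey, grey, grey],
]
found = ball_present(frame)
```

Counting pixels rather than looking for a circle keeps the check cheap, at the cost of being fooled by any other orange object in view.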

Trying to put the devices around me also meant I couldn’t conceal a huge bulk of wire and I needed to make a lot of “long” wire to be able to reach from the controller to the user’s neck. Mess.

In case of fire, the sensors placed around the house would detect heat and smoke. It will alert the user via the speaker on the fire extinguisher. It will also guide the user on how to use it.

When the fire extinguisher is activated, the nozzle is able to rotate and automatically find the source of fire using the heat detection camera.